Click

to go to an example.

to go to an example.

| $Id: config.html 6753 2024-03-26 17:32:59Z JohnHenning $ | Latest: www.spec.org/cpu2017/Docs/ |

|---|

This document describes config files for SPEC CPU®2017, a product of the SPEC® non-profit corporation (about SPEC).

|

A. What is a config file? B. Benchmark selection C. Five consumers |

D. Config file syntax 1. Sections and scope 2. Comments 3. Whitespace 4. Quoting 5. Line continuation 6. Included files 7. Section markers E. Section merging |

F. Variables 1. Defining variables 2. $[square] substitution 3. Useful $[variables] 4. ${curly} interpolation 5. Useful ${variables} 6. Unsetting "%undef%" 7. Debug tips |

All variable types $(MAKEVAR)\$SHELLVAR $[startup] ${during_run}/$during_run %{macro} %{ENV_var} New with CPU 2017 |

II. Config file options for runcpu

A. Precedence: config file vs. runcpu command line

B. Options

action

allow_label_overrideNew

backup_config

basepeak

bind

check_version

command_add_redirect

copies

current_rangeNew

delay

deletework

difflines

enable_monitorNew

env_vars

expand_notes

expid

fail

fail_build

fail_run

feedback

flagsurl

force_monitorNew

http_proxy

http_timeout

idle_current_rangeNew

ignore_errors

ignore_sigint

info_wrap_columns

iterations

keeptmp

labelNew

line_width

locking

log_line_width

log_timestamp

mail_reports

mailcompress

mailmethod

mailport

mailserver

mailto

make

make_no_clobber

makeflags

mean_anyway

minimize_builddirs

minimize_rundirs

nobuild

no_input_handler

no_monitor

notes_wrap_columns

notes_wrap_indent

output_format

output_root

parallel_test

parallel_test_submit

parallel_test_workloadsNew

plain_train

powerNew

power_analyzerNew

preenv

rebuild

reportable

runlist

save_build_filesNew

section_specifier_fatal

setprocgroup

size

src.alt

strict_rundir_verify

sysinfo_program

table

teeout

temp_meterNew

threadsNew

train_single_threadNew

train_with

tune

use_submit_for_compareNew

use_submit_for_speed

verbose

verify_binariesNew

voltage_rangeNew

Power measurement was introduced as an experimental feature in CPU 2017 v1.0.

As of v1.1, more fields have been added, and the feature is now fully supported.

III. Config file options for specmake

|

A. Commonly used Make variables

CC, CXX, FC |

B. New with CPU 2017: Using OpenMP and/or Autopar 1. Summary 2. SPECrate: no OpenMP. No Autopar. 3. SPECspeed: your choice 4. Detail - conditions for enabling OpenMP |

C. Creating your own Make variables D. += is available but use with caution E. Using buildsetup to create a sandbox F. About Automatic Rebuilds |

|

{C|CXX|F}C_VERSION_OPTION New and required with CPU 2017 |

||

IV. Config file options for the shell

|

A. \$SHELLVAR variable substitution B. Shell Options bench_post_setup build_pre_bench build_post_bench fdo_make_clean_passN fdo_make_passN fdo_postN fdo_post_makeN fdo_pre0 fdo_preN fdo_pre_makeN fdo_runN monitor_X post_setup submit |

C. Using submit 1. Basic usage 2. Script generation 3. Quote traps 4. Debug tips 5. Maintainability 6. Reporting |

V. Config file options for human readers

VI. Using Feedback Directed Optimization (FDO)

|

A. Minimum required: PASSn or fdo_ B. Flexible build models C. The config file feedback option D. runcpu --feedback |

VII. The config file preprocessor

|

A. Introduction B. Preprocessor Syntax Basics 1. Column 1. Always Punch Column 1 2. One line per directive 3. Comments C. Macro Definition 1. Defining Macros 2. Undefining macros 3. Redefining macros |

D. Macro Usage E. Conditionals 1. %ifdef 2. %ifndef 3. %if and expression evaluation 4. %else 5. %elif 6. Preprocessor Example: Picking CPUs |

F. Informational Directives 1. %warning 2. %error 3. %info New with CPU 2017 4. %dumpmacros New with CPU 2017 G. Predefined macros and the environment New with CPU 2017 1. Automating output_root 2. Example: Adjusting the Environment |

VIII. Output files - and how they relate to your config file

|

A. Help, I've got too many config files! B. The log file 1. Useful Search Strings 2. About Temporary Debug Logs 3. Verbosity levels C. Deciphering an FDO log file |

D. Help, I've got too many log files! E. Finding the build directory F. Files in the build directory G. For more information |

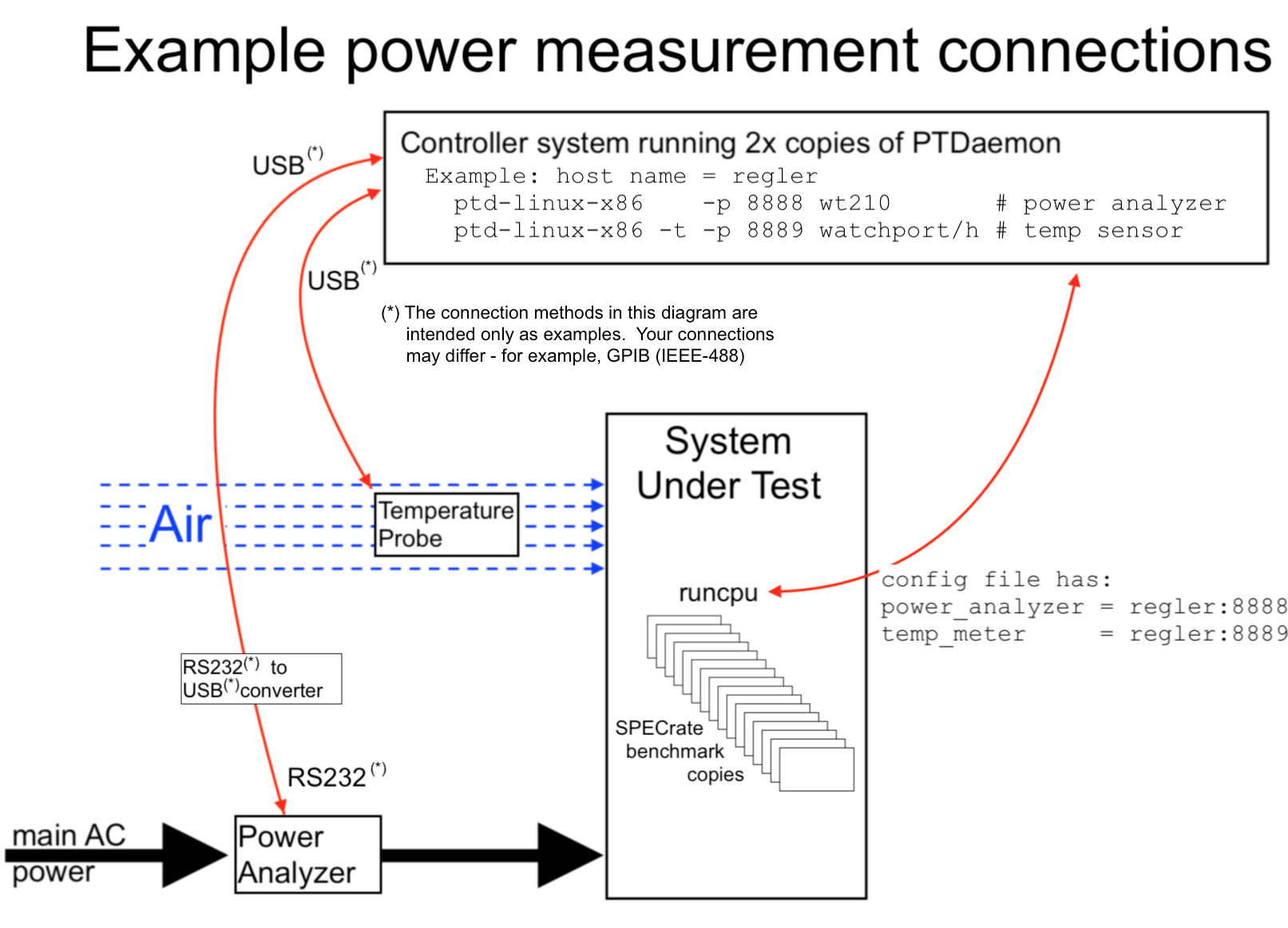

A. Getting started with power measurement

B. PTD examples

C. Descriptive fields for power and temperature

D. Current ranges

E. Power questions?

A. Auxiliary benchmark sets

B. Troubleshooting

C. Obsolete/removed items

allow_extension_override company_name ext hw_cpu_char hw_cpu_mhz hw_cpu_ncoresperchip hw_fpu mach machine_name max_active_compares rate speed sw_auto_parallel test_date tester_name VENDOR

I.A. What is a config file?A SPEC CPU config file is a file that defines how to build, run, and report on the SPEC CPU benchmarks in a particular environment. It defines how SPEC CPU 2017 interacts with your test environment, and lets you describe that environment in human-readable form. A config file provides detailed control of testing, including:

Using your customized options, the SPEC CPU tools automatically create Makefiles, build the benchmarks, run them, generate reports, and write log files. Because they collect your options into one place, config files are key to result reproducibility. For example, if a vendor publishes CPU 2017 results for the SuperHero Model 42 at the SPEC web site www.spec.org/cpu2017, it is expected that a customer can demonstrate similar performance using only 3 ingredients:

|

q. This document looks big and intimidating. Where do I start? a. Don't start here. Start with the Overview and Using SPEC CPU 2017 - the 'runcpu' Command. Afer that, please read section I.C carefully, which explains that config files contain options for five (5) different consumers. You need to recognize which options are for which consumers. Please notice that config files have 3 kinds of sections. You need to know how named sections work. From that point on, you can probably skip around among topics as they may interest you. Tip: Most topics can be found by adding '#topic' to the URL for this document. Examples: #consumers #shell #readers #sw_compiler #OpenMP |

In a config file, you can reference: One or more individual benchmarks, such as 500.perlbench_r, or entire suites, using the Short Tags in the table below.

| Short Tag |

Suite | Contents | Metrics | How many copies? What do Higher Scores Mean? |

| intspeed | SPECspeed® 2017 Integer | 10 integer benchmarks | SPECspeed®2017_int_base SPECspeed®2017_int_peak SPECspeed®2017_int_energy_base SPECspeed®2017_int_energy_peak |

SPECspeed suites always run one copy of each benchmark.

Higher scores indicate that less time is needed. |

| fpspeed | SPECspeed® 2017 Floating Point | 10 floating point benchmarks | SPECspeed®2017_fp_base SPECspeed®2017_fp_peak SPECspeed®2017_fp_energy_base SPECspeed®2017_fp_energy_peak |

|

| intrate | SPECrate® 2017 Integer | 10 integer benchmarks | SPECrate®2017_int_base SPECrate®2017_int_peak SPECrate®2017_int_energy_base SPECrate®2017_int_energy_peak |

SPECrate suites run multiple concurrent copies of

each benchmark.

The tester selects how many. Higher scores indicate more throughput (work per unit of time). |

| fprate | SPECrate® 2017 Floating Point | 13 floating point benchmarks | SPECrate®2017_fp_base SPECrate®2017_fp_peak SPECrate®2017_fp_energy_base SPECrate®2017_fp_energy_peak |

|

|

The "Short Tag" is the canonical abbreviation for use with runcpu, where context

is defined by the tools. In a published document, context may not be clear.

To avoid ambiguity in published documents, the Suite Name or the Metrics should be spelled as shown above. |

||||

(Other benchmarks sets are available, but must be used with great caution. They are described in Appendix A.)

A config file has content for five (5) distinct consumers, as shown in the table.

To understand a config file, you must understand which consumer is addressed at any given

point.

Column 3 below provides a few examples for each; click the roman numerals in column 2 for many more.

| Consumer | List of options |

Examples | Brief description |

| runcpu | II |

copies

output_format reportable threads |

Options that change how runcpu itself works. Many should be familiar from Using SPEC CPU 2017. output_format = text,csv tune = base reportable = yes runlist = fpspeed then the defaults for the runcpu command would change as shown. Both of these would do the same thing: runcpu --config=michael runcpu --config=michael --output=text,csv --tune=base --reportable fpspeed |

|---|---|---|---|

| specmake | III |

OPTIMIZE

PORTABILITY |

Make variables, to control benchmark builds via specmake.

Commonly used specmake variables: section III

|

| shell (or cmd.exe) |

IV |

fdo_post1

fdo_run1 post_setup submit |

Commands to be executed by the Unix shell (/bin/sh) or the Windows command interpreter

(cmd.exe).

fdo_pre0 = rm -Rf /tmp/manmohan/feedback fdo_pre1 = mkdir /tmp/manmohan/feedback Above, a directory (and all its contents) are deleted, and a new one created, prior to a training run for feedback-directed optimization.

Warning: SPEC CPU config files can execute arbitrary

shell commands.

|

| readers | V | hw_model

notes100 sw_compiler |

System Under Test (SUT) description, with enough detail so that the reader can understand what was tested and can reproduce your results. If a SPEC CPU 2017 result is published (whether at SPEC or independently) it must be fully described. |

| preprocessor | VII | %define

%ifdef |

Preprocessing directives and definitions to adjust your config file prior to use. All preprocessor directives begin in column 1. Example: %if %{bits} == 64

% define model -m64

%else

% define model -m32

%endif

CC = gcc %{model}

|

A config file contains: a header section, named sections, and a HASH section.

Scope: Every line is considered to be within the scope of one of these three. Lines prior to the first section marker are in the scope of the header section. All other lines are either in the scope of the most recently preceding section marker or in the HASH section.

| Sections | Description | Example |

| header section |

The header section is the first section, prior to the any named section. Most attempts to address runcpu itself are done in the header section. In the example, lines 1 through 6 are in the header section. |

$ cat -n threeSections.cfg

1 flagsurl = $[top]/config/flags/gcc.xml

2 iterations = 1

3 output_format = text

4 runlist = 519.lbm_r

5 size = test

6 tune = peak

7 fprate=peak:

8 CC_VERSION_OPTION = -v

9 CC = gcc

10 OPTIMIZE = -O2

11 519.lbm_r=peak:

12 OPTIMIZE = -O3

13

14 __HASH__

15 519.lbm_r=peak=none:

16 # Last updated 2017-02-06 14:29:40

17 opthash=ff6059d6d9ec9577f7f49d05178c58688f31004089

18 baggage=

19 compiler_version=\

20 @eNo1jbEOgjAYhPc+RUcdaFEJIWwGHEhQjBLjRn7LLzapLWkL8

21 kpP9OCqkdX070hmtk0bTjMUspiuhQA9RFgfDki3brEkLdkCf0y

22 0NO36VHldDROLqTSzoNS2JfS5pT/DqUAH54cv4tAsjDEC6M9au

23 FuH/CZ+c5Q+pyRd+tUlX

24 compile_options=\

25 @eNp1T11PgzAUfe+vaPrOMjNfJGMJlLqhQBtLX3xptHYGBWoKM

26 vNp93Vjs3obadX2I+sHXZtD+0D3VXr9bX+8/InJBEMAeKBFZLq

27 KgWj06epUADLlCVqe+rFquJaKiHumJSaC1YWAuOAr/DPWvfu4I

28 mALF6zzeSpj96+RITFhJd3rG/dMaQTzEIJYV2T0DJl8RlGfl7Z

29 hjyd8vw+D9MirnY6z5LRW9NOC1yNkc/OfAJtxnuD

30 exehash=5290fe504238c6de1a13e275ab8ca11e035fbb4e7e

31

$

|

|---|---|---|

| or default: |

Options for the header section may also be entered in section default=default=default: or a shorter spelling thereof, such as default: This can be helpful if you need to effectively return to the header section, perhaps when using include. Tip: Nevertheless, it is usually easier to maintain a config files that keeps all runcpu options near the top, preferably in alphabetical order. |

|

| Named sections |

A named section is a portion of the config file that follows a line that contains a section marker. Briefly, a section marker is a 1-to-3 part string with equals signs in the middle and a colon at the end; see detail below. The example has 2 named sections, delimited by 2 section markers on lines 7 and 11. Notice that the example sets OPTIMIZE in both of the named sections. To understand which one gets used, see the precedence rules, which describe how sections interact. |

|

| HASH section |

The HASH Section is the final, automatically-generated section. It starts with __HASH__ and contains checksums. The example starts the HASH section at line 14. (For readability, the lines are truncated on the right.) The automatically-updated checksums ensure that binaries match their config file. You can optionally disable checking, but doing so is strongly discouraged. See verify_binaries. Config files printed by --output_format=config do not include the HASH section. |

Comments begin with '#'. There are two types:

| Syntax | Type | Saved? | Detail |

|---|---|---|---|

| # | Regular | Yes | Regular comments can be full-line or trailing. A copy of your config file is saved with the test results. If you submit your results to SPEC for publication, the regular comments can be read by anyone. |

| #> | Protected | No | A full-line comment that begins with #> is a protected comment and will not be saved with your results. You can use protected comments for proprietary information, for example: #> I didn't use the C++ beta because of Bob's big back-end bug. |

Both types of comments are ignored when processing the config file.

Full-line comments: If # is the first non-blank item on a line, then the whole line is a comment. Comment lines can be placed anywhere in a config file.

Trailing comments: If a line has non-blank items, you can (usually) add regular comments. You cannot write a protected trailing comment. If you try to use a protected comment after some other element, it is treated as a regular comment.

All comments below will be saved except the one that says 'NOT saved'.

# New optimizers.

default=base: # Most optimizers go up to ten.

OPTIMIZE = -O11 # These go to eleven.

#> This comment is NOT saved

COPTIMIZE = -std #> This comment is saved

Not a comment: These instances of # do not start a comment:

\# To use a # without starting a comment, put a backslash in front of it:

hw_model = Mr. Lee's \#1 Semi-Autonomous Unit

notesN...# Config file notes are printed in reports as entered, including any instances of #

#notes100 = This note will not be printed, because # was the first item on the line.T notes110 = This note will be printed in reports. All of it. # Even this part.

Blank lines can be placed anywhere in a config file. They are ignored.

Spaces at the beginning of lines are ignored, with the exception that preprocessor directives always begin with a percent sign (%) in the first column. You can put spaces after the percent sign, if you wish (example).

Spaces within a line are usually ignored. Don't try to break up a section marker, and you can't break up a single word (say 'OPTIMIZE' not 'OPT I MIZE'). If multiple spaces separate line elements, it is as if there were only one space. Each of these have the same meaning:

OPTIMIZE=-O2 -noalias OPTIMIZE = -O2 -noalias

One place where spaces are considered significant is in notes, where you can use spaces to line up your comments. Notes are printed in a fixed-width font.

Trailing spaces and tabs are ignored, unless they are preceded by a backslash. For example, if space.cfg contains:

$ cat trailing_space.cfg PATH1 = /path/without/any/trailer FC = $(PATH1)/f90 PATH2 = /path/with/trailing/space\ CC = $(PATH2)/cc $

then we can use fake to demonstrate the compile commands that would be generated (+ Unix commands grep, head, and cut to pick out one example).

$ cat trailing_space.sh runcpu -c space --fake 527.cam4 | grep without/ | head -1 | cut -b 1-80 runcpu -c space --fake 527.cam4 | grep trailing | head -1 | cut -b 1-80 $ $ ./trailing_space.sh /path/without/any/trailer/f90 -c -o ESMF_BaseMod.fppized.o -I. -Iinclude -Inetcd /path/with/trailing/space /cc -c -o GPTLget_memusage.o -DSPEC -DSPEC_CPU -DNDEBU $ (Notes about examples)

Notice that the PATH2 trailing space is preserved

If you use double (") or single (') quotes within a config file, runcpu leaves them alone. The assumption is that you put them there because one of the consumers (such as a shell) needs them. The quotes are not significant to runcpu but may be highly significant to the consumer. See the section on quote traps.

If you use a backslash (\) it is usually not significant. The exceptions are:

Many fields, including most reader fields, can be continued by adding a number:

sw_os1 = Turboblaster OS V1.0 sw_os2 = (Tested with Early Hardware Release 0.99 sw_os3 = and Patch 42.) hw_disk105 = 42 TB on 6x 8 TB 10K RPM SAS Disks hw_disk110 = arranged as 4x 2-way mirrors; plus hw_disk115 = Turboblaster Disk Accelerator

The fields which cannot be continued are the ones that are expecting a simple integer, such as hw_nchips and license_num; and the ones that expect a date, such as hw_avail. You can pick your own style of numbering, as in the examples above. (Note: the stored results from your test always use three-digit numbers, and have slightly different syntax, as discussed in utility.html.)

Shell-style "here documents" with double angle brackets and a delimiter word (e.g. <<EOT) can be used to set multi-line values. Backslash-continued lines are also supported. For example:

$ cat continued_lines.cfg

expand_notes = 1

output_format = text

output_root = /tmp/fake_lines

runlist = 519.lbm_r

here_continued = <<EOT

+ This is +

+ a test +

EOT

backslash_continued = + So is +\

+ this +

notes1 = ${here_continued}

notes2 = ${backslash_continued}

$ cat continued_lines.sh

runcpu --config=continued_lines --fakereport | grep txt

grep '+' /tmp/fake_lines/result/CPU2017*txt

$ ./continued_lines.sh

format: Text -> /tmp/fake_lines/result/CPU2017.001.fprate.refrate.txt

+ This is +

+ a test +

+ So is +

+ this +

$ (Notes about examples)

You can include other files in your config file using include:

Multiple files may be included.

Included files may use macros (and you can use configpp to check the effect).

Included files may write to arbitrary sections, including

(effectively) the header section.

Example: a config file is developed on one system, and applied on a different System Under Test (SUT). The compilers are, of course, installed on the development system. They might not be present on the SUT.

|

The compiler is described in the main config file on lines 10-12.

$ cat -n include.cfg 1 iterations = 1 2 output_format = text 3 output_root = /tmp/example 4 runlist = 519.lbm_r 5 size = test 6 include: SUT.inc 7 default: 8 CC = gcc 9 CC_VERSION_OPTION = -V 10 sw_compiler001 = C/C++/Fortran: Version 6.2.0 of GCC 11 sw_compiler002 = the GNU Compiler Collection 12 sw_avail = Aug-2016 $ Note the software date (sw_avail) on line 12 above.

$ cp Turboblaster.inc SUT.inc $ cat Turboblaster.inc default: hw_model = SuperHero IV hw_avail = Feb-2018 hw_vendor = Turboblaster $ Note the hardware date (hw_avail) in the include file.

$ cat include.sh runcpu --config=include | grep txt grep avail /tmp/example/result/*txt $ ./include.sh format: Text -> /tmp/example/result/CPU2017.001.fprate.test.txt Test sponsor: Turboblaster Hardware availability: Feb-2018 Tested by: Turboblaster Software availability: Aug-2016 $ (Notes about examples) |

Notes on the Examples Many of the examples that begin with cat somefile are on your installed copy of SPEC CPU 2017, in directory $SPEC/config/tiny-examples or %SPEC%\config\tiny-examples. cat example.cfg Display config file. Windows users can use type. cat example.sh For config files with many lines of output, a corresponding .sh script file has commands to pick out a subset. Windows users could create a .bat file with call runcpu. cat -n Add line numbers. CC_VERSION_OPTION How to say to the compiler "Please tell me your version". A required option for all except fake runs. (detail) cp Copy a file. Windows users could say copy. cut, head, tail Various ways to subset output. default=base: A section marker --fake Many examples use --fake or --fakereportable, to quickly provide a dry run demonstration. You can try fake examples without even installing a compiler. iterations=1 Demonstrate using just one repetition. Warning: Reportable runs use at least 2. grep Search the output. Windows users could try findstr ls List files. Windows users could say dir. output_root Send results to named directory. runlist Often, 519.lbm_r is demonstrated because it compiles quickly. size=test For demonstration purposes, use the very short workload. Warning: The intent of 'test' is just a sanity check that a binary works. It is not a comparable metric. If you publish results, you must use the 'ref' workloads. For other fields, see table of contents. Most examples were tested with a SPEC CPU 2017 release candidate and this document contains their actual output, except: white space was liberally edited and (in a very few cases) line width was reduced by chopping out words. |

A named section begins with a section marker and continues until the next section marker or the HASH section is

reached.

Named sections can be entered in any order.

Section markers can be repeated. Material from repeated sections is automatically consolidated.

A section marker is a one- to three-part string of the form:

benchmark[,...]=tuning[,...]=label[,...]:

The three parts of a section marker are called the section specifiers, with allowed values:

| Section specifier | Allowed values |

|---|---|

| benchmark | default

A metric: intrate, intspeed, fprate, or fpspeed Any individual benchmark, such as 503.bwaves_r A list, such as: 503.bwaves_r,603.bwaves_s |

| tuning | default

base peak A list of tuning levels: base,peak |

| label | default

Any specific label: an arbitrary tag to identify binaries and directories A list of labels, separated by commas |

Trailing default section specifiers may be omitted from a section marker. In the pairs below, in each case, the second line is equivalent to the first:

intrate=default=default: 628.pop2_s=base=default: default=default=default: intrate: 628.pop2_s=base: default:

By constructing section markers, you determine how you would like your options applied. Benchmarks are built according

to instructions in the sections that they match, subject to rules for combining sections and resolving conflicts among them.

Sections are combined using these rules.

Click  to go to an example.

to go to an example.

For the benchmark specifier, the precedence is:

For the benchmark specifier, the precedence is:

highest named benchmark(s)

suite name

lowest default

(See also Appendix A)

For the tuning specifier, base

or peak has higher precedence than default.

For the tuning specifier, base

or peak has higher precedence than default.  For the label specifier, any named label

is at a higher precedence level than default.

For the label specifier, any named label

is at a higher precedence level than default.| Precedence Example 1: benchmark specifiers | |

|

For the benchmark specifier, the precedence is: highest named benchmark(s)

suite name

lowest default

The flagsurl line picks up definitions that are provided with SPEC CPU 2017. 519.lbm_r is an fprate benchmark, but it does not use the setting on lines 14-15. Instead, it uses the higher precedence lines 10-11 (named benchmark). 619.lbm_s is an fpspeed benchmark. The highest precedence section for it is on lines 12-13. 505.mcf_r is an intrate benchmark. It gets the low-precedence OPTIMIZE setting from lines 16-17. |

$ cat -n precedence_example1.cfg

1 flagsurl = $[top]/config/flags/gcc.xml

2 iterations = 1

3 output_format = text

4 output_root = /tmp/ptest

5 runlist = 519.lbm_r,619.lbm_s,505.mcf_r

6 size = test

7 default:

8 CC_VERSION_OPTION = -v

9 CC = gcc

10 519.lbm_r:

11 OPTIMIZE = -O3

12 fpspeed:

13 OPTIMIZE = -O2

14 fprate:

15 OPTIMIZE = -O1

16 default:

17 OPTIMIZE = -O0

$ cat -n precedence_example1.sh

1 runcpu --config=precedence_example1 | grep txt

2 cd /tmp/ptest/result

3 grep 'O[0-9]' *txt

$ ./precedence_example1.sh

format: Text -> /tmp/ptest/result/CPU2017.001.fprate.test.txt

format: Text -> /tmp/ptest/result/CPU2017.001.fpspeed.test.txt

format: Text -> /tmp/ptest/result/CPU2017.001.intrate.test.txt

CPU2017.001.fprate.test.txt: 519.lbm_r: -O3

CPU2017.001.fpspeed.test.txt: 619.lbm_s: -O2

CPU2017.001.intrate.test.txt: 505.mcf_r: -O0

$ (Notes about examples)

|

| Precedence Example 2: tuning specifiers | |

|

For the tuning specifier, base or peak has higher precedence than default. The first few lines of the config file use similar features as the previous example. The tuning from line 12 is used for base, and line 14 for peak. |

$ cat -n precedence_example2.cfg

1 flagsurl = $[top]/config/flags/gcc.xml

2 iterations = 1

3 output_format = text

4 output_root = /tmp/ptest2

5 runlist = 519.lbm_r

6 size = test

7 default=default:

8 CC_VERSION_OPTION = -v

9 CC = gcc

10 OPTIMIZE = -O0

11 default=base:

12 OPTIMIZE = -O1

13 default=peak:

14 OPTIMIZE = -O3

$ cat precedence_example2.sh

runcpu --config=precedence_example2 --tune=base | grep txt

runcpu --config=precedence_example2 --tune=peak | grep txt

cd /tmp/ptest2/result

grep 'O[0-9]' *txt

$ ./precedence_example2.sh

format: Text -> /tmp/ptest2/result/CPU2017.001.fprate.test.txt

format: Text -> /tmp/ptest2/result/CPU2017.002.fprate.test.txt

CPU2017.001.fprate.test.txt: 519.lbm_r: -O1

CPU2017.002.fprate.test.txt: 519.lbm_r: -O3

$ (Notes about examples)

|

| Precedence Example 3: label specifiers | |

|

For the label specifier, any named label has higher precedence than the default. This config file is simpler than the previous examples, because we don't even bother to run it; instead, --fake is used. The runcpu command uses --label=OhTwo. Therefore, the default setting for OPTIMIZE on line 6 is over-ridden by the settings on lines 7-8. |

$ cat -n precedence_example3.cfg 1 runlist = 619.lbm_s 2 size = test 3 fpspeed=base=default: 4 CC_VERSION_OPTION = -v 5 CC = gcc 6 OPTIMIZE = -O0 7 fpspeed=base=OhTwo: 8 OPTIMIZE = -O2 $ cat precedence_example3.sh runcpu --config=precedence_example3 --fake --label=OhTwo | grep lbm.c $ ./precedence_example3.sh gcc -c -o lbm.o -DSPEC -DSPEC_CPU -DNDEBUG -DLARGE_WORKLOAD -O2 lbm.c $ (Notes about examples) |

| Precedence Example 4: Combining sections | |

|

Combine sections that apply to a benchmark, if there is no conflict among them. Note that line 1 sets the label, and line 3 sets the tuning. All sections -- including lines 6, 8, 10, and 12 -- contribute to the compile command, which has been wrapped for readability. |

$ cat -n precedence_example4.cfg

1 label = wall

2 runlist = 619.lbm_s

3 tune = peak

4 default:

5 CC_VERSION_OPTION = -v

6 CC = gcc

7 fpspeed:

8 OPTIMIZE = -O1

9 default=peak:

10 COPTIMIZE = -ftree-vectorize

11 default=default=wall:

12 EXTRA_COPTIMIZE = -Wall

$ cat precedence_example4.sh

runcpu --config=precedence_example4 --fake | grep lbm.c

$ ./precedence_example4.sh

gcc -c -o lbm.o -DSPEC -DSPEC_CPU -DNDEBUG -DLARGE_WORKLOAD

-O1 -ftree-vectorize -Wall lbm.c

$ (Notes about examples)

|

| Precedence Example 5: Conflicting sections | |

|

If sections conflict with each other, the order of precedence is: highest benchmark

tuning

lowest label

The first runcpu command includes --label=wall. It uses the OPTIMIZE setting from lines 13-14, which have higher precedence than the default (lines 15-16). The second runcpu command includes both --label=wall and --tune peak. The OPTIMIZE setting from lines 11-12 is used. The third also uses --label=wall --tune peak; and runs 619.lbm_s, which is an fpspeed benchmark. The OPTIMIZE setting from lines 9-10 is used. |

$ cat -n precedence_example5.cfg 1 flagsurl = $[top]/config/flags/gcc.xml 2 iterations = 1 3 output_format = text 4 output_root = /tmp/ptest 5 size = test 6 default: 7 CC_VERSION_OPTION = -v 8 CC = gcc 9 fpspeed: 10 OPTIMIZE = -O3 11 default=peak: 12 OPTIMIZE = -O2 13 default=default=wall: 14 OPTIMIZE = -O1 15 default: 16 OPTIMIZE = -O0 $ cat precedence_example5.sh runcpu --fake --config=precedence_example5 --label=wall 519.lbm | grep lbm.c runcpu --fake --config=precedence_example5 --label=wall -T peak 519.lbm | grep lbm.c runcpu --fake --config=precedence_example5 --label=wall -T peak 619.lbm | grep lbm.c $ ./precedence_example5.sh gcc -c -o lbm.o -DSPEC -DSPEC_CPU -DNDEBUG -DSPEC_AUTO_SUPPRESS_OPENMP -O1 lbm.c gcc -c -o lbm.o -DSPEC -DSPEC_CPU -DNDEBUG -DSPEC_AUTO_SUPPRESS_OPENMP -O2 lbm.c gcc -c -o lbm.o -DSPEC -DSPEC_CPU -DNDEBUG -DLARGE_WORKLOAD -O3 lbm.c $ (Notes about examples) |

| Precedence Example 6: Section order |

For sections at differing precedence levels, order does not matter. These two config files use a different order but perform the same functions when runcpu applies them.. $ diff --side-by-side precedence_example6a.cfg precedence_example6b.cfg

iterations = 1 iterations = 1

output_format = text output_format = text

output_root = /tmp/ptest output_root = /tmp/ptest

runlist = 519.lbm_r runlist = 519.lbm_r

size = test size = test

default: default:

CC_VERSION_OPTION = -v CC_VERSION_OPTION = -v

CC = gcc CC = gcc

519.lbm_r: <

OPTIMIZE = -O3 <

fprate: <

OPTIMIZE = -O1 <

default: default:

OPTIMIZE = -O0 OPTIMIZE = -O0

> fprate:

> OPTIMIZE = -O1

> 519.lbm_r:

> OPTIMIZE = -O3

$ cat precedence_example6.sh

runcpu --fake --config=precedence_example6a | grep lbm.c

runcpu --fake --config=precedence_example6b | grep lbm.c

$ chmod +x precedence_example6.sh

$ ./precedence_example6.sh

gcc -c -o lbm.o -DSPEC -DSPEC_CPU -DNDEBUG -DSPEC_AUTO_SUPPRESS_OPENMP -O3 lbm.c

gcc -c -o lbm.o -DSPEC -DSPEC_CPU -DNDEBUG -DSPEC_AUTO_SUPPRESS_OPENMP -O3 lbm.c

$ (Notes about examples)

|

| Precedence Example 7: Repeated Sections | |

|

If a section occurs more than once, the settings are combined. If there are conflicts, the last instance wins. Recall that trailing default specifiers can be dropped. Therefore, these three section markers: 7 fpspeed: 12 fpspeed=default: 17 fpspeed=default=default: actually name the same section, which is entered three times. The EXTRA_CFLAGS, COPTIMIZE, and EXTRA_COPTIMIZE settings (lines 9, 14, 19) are combined. The OPTIMIZE settings on lines 8, 13, and 18 conflict with each other. Only the last one is retained. |

$ cat -n precedence_example7.cfg

1 label = wall

2 runlist = 619.lbm_s

3 tune = peak

4 default:

5 CC_VERSION_OPTION = -v

6 CC = gcc

7 fpspeed:

8 OPTIMIZE = -O1

9 EXTRA_CFLAGS = -finline-functions

10 intrate:

11 OPTIMIZE = -O0

12 fpspeed=default:

13 OPTIMIZE = -O2

14 COPTIMIZE = -ftree-vectorize

15 intrate=peak:

16 OPTIMIZE = -O0

17 fpspeed=default=default:

18 OPTIMIZE = -O3

19 EXTRA_COPTIMIZE = -Wall

$ cat precedence_example7.sh

runcpu --config=precedence_example7 --fake | grep lbm.c

$ ./precedence_example7.sh

gcc -c -o lbm.o -DSPEC -DSPEC_CPU -DNDEBUG -DLARGE_WORKLOAD

-O3 -ftree-vectorize -finline-functions -Wall lbm.c

$ (Notes about examples)

|

|

You can do variable substitution within a config file.

|

q. Wait a minute... all these choices for substitution? Which do I want? a. Probably either the first in the list: specmake; or the last: the preprocessor. |

| Format | Example | Description + consumer | Traps for the unwary |

| $(round) | $(COMPILER_DIR) | Make variables, interpreted by specmake | |

|---|---|---|---|

| \$SHELLVAR | \$SPEC | Shell or command interpreter variables. | Quoting |

| $[square] | $[top]/config | Unchanging items, substituted by runcpu at startup. | Timing |

| ${curly}

$unbracketed |

${hw_avail}ish

$hw_avail |

Changeable items subject to perl interpolation.

$unbracketed is allowed if it is not ambiguous. |

Try not to confuse with %{curly} |

| %{ENV_var} | %{ENV_LIBRARY_PATH} | Predefined macros for environment variables, handled by the preprocessor. | |

| %{curly} | %{bits} | macros, handled by the preprocessor | Try not to confuse with ${curly} |

You can create your own runcpu variables using a line of the form

name = value

The name may contain only letters, digits, and underscores (a hyphen is NOT allowed).

Start with a letter.

You may indent your definitions if you wish (see: whitespace)

Exception: preprocessor macros are different on all of the above. Hyphens are allowed, use

%define name value

and the % must appear in column 1. You can indent after the % if you wish.

Conventions: Although not required, certain conventions are usually followed:

Examples of the above (in the same order)

%ifndef processorNumaControl

% define processorNumaControl firstTouch # macro

%endif

default:

my_submit_cmd = numactl -C $BIND # runcpu variable

MYTUNE = -O2 --math=SIMD # make variable

ENV_LD_LIBRARY_PATH = /opt/lib # environment variable

The remainder of this section I.F is about runcpu variables -- the $[square] and ${curly} rows from the table at the top.

Immediately after preprocessing, variables that are delimited by $[square

brackets] are substituted.

Any value set in the config file can be substituted, provided that is visible in the

current scope.

You can access the value of additional variables that you may have created.

See the list of useful variables below.

Perhaps the most useful is $[top] for the top of the SPEC CPU 2017 tree,

often found in contexts such as:

flagsurl01 = $[top]/config/compiler.xml

flagsurl02 = $[top]/config/platform.xml

EXTRA_LIBS = $[top]/mylibs

preENV_LIBRARY_PATH = $[top]/lib64:$[top]/lib

Traps for the unwary: In some cases it may be obvious to the human which value to use, but the tools aren't as smart as you.

output_root:

You cannot set an output_root that depends on a runcpu variable.

You can set one that uses a macro:

output_root=$[top]/my/path # wrong output_root=${top}/my/path # wrong output_root=%{ENV_SPEC}/my/path # right

Square substitution is done early. That comes in handy if you need a variable right away, for example, in order to use it with preENV.

$ cat EarlySub.cfg SW_DIR = /opt/path/to/compilers preENV = 1 preENV_LD_LIBRARY_PATH = $[SW_DIR]/lib $ cat EarlySub.sh runcpu --config=EarlySub --fake 519.lbm_r | grep '^LD' | uniq $ ./EarlySub.sh LD_LIBRARY_PATH=/opt/path/to/compilers/lib $ (Notes about examples)

The example below uses variables defined in several named sections. The sections are delimited by section markers default: (line 8), default=base: (line 11), and default=peak: (line 15).

$ cat -n square.cfg

1 expand_notes = 1

2 iterations = 1

3 output_format = text

4 output_root = /tmp/square

5 runlist = 519.lbm_r

6 size = test

7 tune = base,peak

8 default:

9 CC = gcc

10 CC_VERSION_OPTION = -v

11 default=base:

12 the_system = STAR

13 OPTIMIZE = -O1

14 notes_base_100 = base tuning uses '$[CC]' '$[OPTIMIZE]' on system '$[the_system]'

15 default=peak:

16 OPTIMIZE = -O2

17 notes_peak_100 = peak tuning uses '$[CC]' '$[OPTIMIZE]' on system '$[the_system]'

18

$ cat square.sh

runcpu --config=square | grep txt

grep tuning /tmp/square/result/CPU2017.001.fprate.test.txt

$ ./square.sh

format: Text -> /tmp/square/result/CPU2017.001.fprate.test.txt

base tuning uses 'gcc' '-O1' on system 'STAR'

peak tuning uses 'gcc' '-O2' on system ''

$ (Notes about examples)

Note that line 14 finds all three variables that it is looking for, but line 17 does not. If it is not clear why this happens, please see the descriptions of named sections and precedence above.

Useful $[variables] include:

| $[configfile] | Your config file name |

|---|---|

| $[configpath] | The full path to your config file |

| $[dirprot] | protection that is applied to directories created by runcpu |

| $[endian] | 4321 or 87654321 for big endian; 1234 or 12345678 for little |

| $[flag_url_base] | directory where flags files are looked up |

| $[OS] | unix or windows |

| $[os_exe_ext] | exe for windows, nil elsewhere |

| $[realuser] | the user name according to the OS |

| $[top] | the top directory of your installed SPEC CPU 2017 tree |

| $[username] | the username for purposes of tagging run directories |

| $[uid] | the numeric user id |

You can access the initial value of most options that you can enter into a config file, including:

| During a run, variables that are delimited by ${curly brackets} are substituted: | ${command} |

| Usually, variables can be spelled with or without the curlies: | $command or ${command} |

| Exception 1: curlies are required if the variable is adjacent to other text | ${command}s |

| Exception 2: curlies are not allowed for: | $BIND and $SPECCOPYNUM |

Runcpu uses perl interpolation.

Only scalars (not: perl arrays and hashes) can be interpolated.

Example: on the notes100 line, you could optionally add say either ${lognum} or $lognum, but don't try taking the curly brackets away from ${size}.

$ cat just.cfg

expand_notes = 1

notes100 = Just ${size}ing, in run $lognum

output_root = /tmp/just

runlist = 505.mcf_r

size = test

CC = gcc

CC_VERSION_OPTION = -v

$

$ cat just.sh

runcpu -c just | grep txt

grep Just /tmp/just/result/CPU2017.001.intrate.test.txt

$

$ ./just.sh

format: Text -> /tmp/just/result/CPU2017.001.intrate.test.txt

Just testing, in run 001

$ (Notes about examples)

Traps for the unwary

Timing: Some variables are only defined at certain times, and a line that uses it might be interpolated at a different time. Therefore interpolation won't always do what you might wish. In particular, notes are not expanded in the context of a particular benchmark run, and therefore variables such as ${tune} are not useful within them.

output_root:

You cannot set an output_root that depends on a runcpu variable.

You can set one that uses a macro:

output_root=$[top]/my/path # wrong output_root=${top}/my/path # wrong output_root=%{ENV_SPEC}/my/path # right

These variables may be of interest:

| ${baseexe} | The first part of the executable name, which is <baseexe>_<tune>.<label>. For example, in "lbm_r_base.foo", baseexe is "lbm_r". |

|---|---|

| ${benchmark} | The number and name of the benchmark currently being run, for example 519.lbm_r |

| ${benchname} | The name of the benchmark currently being run, for example lbm_r |

| ${benchnum} | The number of the benchmark currently being run, for example 519 |

| ${benchtop} | The top directory for the benchmark currently being run, for example /spec/cpu2017/benchspec/CPU/519.lbm_r |

| $BIND | A value from your bind list, typically a numeric identifier for a processor on your system. This variable is actually interpreted by specinvoke, and cannot be spelled with braces. Say $BIND, do not say ${BIND}. |

| ${command} | The shell command line to run the current benchmark, for example ../run_base_test_sticky.0000/lbm_r_base.sticky 20 reference.dat 0 1 100_100_130_cf_a.of |

| ${commandexe} | The executable for the current command, for example ../run_base_test_none.0000/lbm_r_base.sticky |

| ${label} | The label for the benchmark being run |

| ${iter} | The current iteration number |

| ${logname} | The complete log file name, for example /spec/cpu2017/result/CPU2017.168.log |

| ${lognum} | The log file number, for example 168 |

| $SPECCOPYNUM | The current copy number, when running a SPECrate run. This variable is actually interpreted by specinvoke, and cannot be spelled with braces. Say $SPECCOPYNUM, do not say ${SPECCOPYNUM}. The first copy is 0 (zero). |

| SPECUSERNUM | Do not use. This is the older, obsolete, CPU 2000 spelling for what is now called SPECCOPYNUM. If you use it, it will be silently ignored - no warning is printed. |

| ${tune} | The tuning for the benchmark being run (base or peak) |

| ${workload} | The current workload number (within the iteration) |

For a complete list of the available variables relative to the current config file, set

expand_notes = 1 verbose = 35 # or higher

Then, do a run that causes a command substitution to happen.

In the log, you will find many lines of the

form:

- Variables available for interpolation that have changed since the last list:

(From config) $runmode = "rate"

(From config) $size = "test"

- Variables available for interpolation that have changed since the last list:

(From config) $size = "train"

It is sometimes useful to undo the setting of a variable that would otherwise be included from another section. This can be accomplished using the special value %undef%. In the example, line 14 undefines COPTIMIZE when compiling peak:

$ cat -n gnana.cfg

1 flagsurl = $[top]/config/flags/gcc.xml

2 iterations = 1

3 output_format = text

4 output_root = /tmp/undef

5 runlist = 519.lbm_r

6 size = test

7 tune = base,peak

8 default:

9 CC_VERSION_OPTION = -v

10 CC = gcc

11 OPTIMIZE = -O2

12 COPTIMIZE = -fno-tree-pre

13 519.lbm_r=peak:

14 COPTIMIZE = %undef%

15

$ runcpu --config=gnana | grep txt

format: Text -> /tmp/undef/result/CPU2017.001.fprate.test.txt

$ cd /tmp/undef/benchspec/CPU/519.lbm_r/build

$ grep OPTIMIZE build_base_none.0000/Makefile.spec

COPTIMIZE = -fno-tree-pre

OPTIMIZE = -O2

$ grep OPTIMIZE build_peak_none.0000/Makefile.spec

COPTIMIZE =

OPTIMIZE = -O2

$ (Notes about examples)

Notice that in the build directory, COPTIMIZE is present for base and absent for peak.

When debugging a config file that uses runcpu variables, try:

iterations = 1 minimize_rundirs = 0 reportable = 0 runlist = (one or two benchmarks) size = test verbose = 40

Using --fake will probably be informative. Look inside the log for the (case-sensitive) word 'From'.

This section documents options that control the operation of runcpu itself.

In the list that follows, some items are linked to the document Using SPEC CPU 2017 - the 'runcpu' Command because they can be specified either in a config file, or on the runcpu command line.

New with CPU 2017, If an option is specified in both places, the command line wins.

In the table that follows, if an option is documented as accepting the values "no" and "yes", these may also be specified as "false" and "true", or as "0" and "1".

The "Use In" column indicates where the option can be used:

| H | use only in header section |

| N | use in a named section. |

| H,N | can be used in both the header section and in named sections. The item can therefore be applied on a global basis, and/or can be applied to individual benchmarks. |

| Option | Use In | Default | Meaning |

| action | H | validate | What to do. The available actions are defined in the runcpu

document. See also the buildsetup example in the section on specmake. |

| allow_label_override | H | no | The runcpu command can use --label to select sections that apply to a specific label. If the label mentioned on the runcpu command does not occur in any section marker:

|

| backup_config | H | yes | When updating the hashes in the config file, make a backup copy first. Highly recommended to defend against full-file-system errors, system crashes, or other unfortunate events. |

| Option | Use In | Default | Meaning |

| basepeak | H,N | no | Roughly: Report base results for peak. The more precise meaning depends on context: Config file header section:

Any other config file section:

The rawformat option --basepeak : Copy base results to peak. Sample usage scenarios: Problem: Ryan wants to leave early today. At noon, his manager tells him that he can go home after he produces reportable base and peak metrics for a system. Similar systems need about 6 hours to run 3x base and 3x peak iterations. Although Ryan borders on screaming, he quietly agrees to the task. Solution:

Warning: this works only if the basepeak option is placed in the header section. Put it anywhere else, spend more time indoors. Problem: Ryan has developed peak tuning for all but one benchmark, where the base tuning is "good enough, but noisy" -- it is subject to +/- 2% run-to-run variation, and he is tired of answering questions about it. Solution: Ryan sets 997.noisy=peak: basepeak=yes Identical results are reported for both base and peak and no questions are asked about 997.noisy. Problem: Ryan did a big reportable run and notices afterwards that base was actually better than

peak.

|

| Option | Use In | Default | Meaning |

| bind without trailing digits |

H,N | '' |

List of values to substitute for $BIND in a submit command. This can be a simple comma (or white-space) separated list, such as: bind = 0,1,2,3,4,5,6,7, 16,17,18,19,20,21,22,23 If your line is too long, don't try to continue it by adding a numeral to 'bind'; that has a different meaning, as described in the next section. Instead, you can continue it either by putting a backslash at the end of lines, or by using a here document. The above single line could equally well be expressed as: bind = <<EOT 0, 1, 2, 3, 4, 5, 6, 7, 16, 17, 18, 19, 20, 21, 22, 23 EOT The trailing comma after the "7" is both optional and harmless. It can be present or not, as you wish; in either case, the sequence of values for this example will include ...5, 6, 7, 16, 17, 18... |

| bindn (n= digits) |

H,N | '' |

List of strings to substitute for $BIND in a submit command.

Put each string on a new line, increasing the index n. Like notes, the values of the

indices are not important and are used for ordering only. If there are more copies than bind values, they will be

re-used in a circular fashion. If there are more bind values specified than copies, then only as many as needed will

be used.

$ cat bindN.cfg

copies = 4

iterations = 1

output_root = /tmp/submit

runlist = 541.leela_r

size = test

intrate:

bind0 = assign_job cpu_id=11

bind1 = assign_job cpu_id=13

bind2 = assign_job cpu_id=17

bind3 = assign_job cpu_id=19

submit = $BIND ${command}

$ runcpu --fake --config=bindN | grep '^assign' | cut -b 1-70

assign_job cpu_id=11 ../run_base_test_none.0000/leela_r_base.none test

assign_job cpu_id=13 ../run_base_test_none.0000/leela_r_base.none test

assign_job cpu_id=17 ../run_base_test_none.0000/leela_r_base.none test

assign_job cpu_id=19 ../run_base_test_none.0000/leela_r_base.none test

$(Notes about examples)

|

|---|

| Option | Use In | Default | Meaning | ||||||||

| check_version | H | no (yes for reportable runs) |

When set, before doing a reportable run, runcpu will download information about available updates from www.spec.org. In this way, you can be notified if the version of the suite that you're using is out-of-date. Setting this variable to no will disable this check. If you would like to check your version for a NON-reportable run, you will need to add --check_version to your command line. Setting check_version=yes in the config file only causes the check for reportable runs. |

||||||||

| command_add_redirect | H | no | If set, the generated ${command} will include redirection operators (stdout, stderr), which are passed along to the shell that executes the command. If this variable is not set, specinvoke does the redirection. This option is commonly used with fdo_run or submit. See Tip #3 under FDO Example 5. When used with submit, the command_add_redirect feature lets you choose whether

redirection operators (such as <this_benchmark.in or >that_benchmark.out) are

applied to your entire modified submit command (the default) or just to the portion that has ${command}.

The above is only pseudo-code; see the section on Using Submit for real examples. |

||||||||

| Option | Use In | Default | Meaning |

| copies | H,N | 1 | Number of copies. For base, the number of copies must be the same for all benchmarks, but for peak it is allowed to vary: for example, you could decide to run 64 copies of all benchmarks except 519.lbm_r, which would run only 63. Note: If you select basepeak=yes for a SPECrate benchmark, the number of copies in peak will be forced to be the same as in base. |

| current_range | H,N | none | Set the maximum current in amps that is expected to be used during the run. If you are unsure how to determine this, see the suggestions in the section on Power Measurement. The current_range can be used to control the settings across all benchmarks by putting it in the header section, or on a per benchmark level by putting it in a named section. The run rules allow setting the current range to different values for each benchmark, even in base. For example, the following is allowed. Notice that the integer rate benchmark 557.xz_r uses a different current_range than the other integer rate benchmarks. intrate=base: current_range = 3 557.xz_r=base: current_range = 4 intrate=peak: current_range = 5 557.xz_r=peak: current_range = 6 Auto-ranging: You may specify auto-ranging with the word "auto". Warning: auto-ranging is allowed only if it is not possible to set a specific range, and must be justified. If you plan to use your results in public, preserve rawfiles that demonstrate the failure. See the discussion at runrules.html#autoranging. See also the discussion of current ranges in the section on Power Measurement. |

| delay | H,N | 0 | Insert a delay of the specified number of seconds before and after benchmark execution. This delay does not contribute to the measured runtime of the benchmark. This delay is also not available in a reportable run. |

| deletework | H,N | no | If set to yes, always delete existing benchmark working directories. An extra-careful person might want to set this to ensure no unwanted leftovers from previous benchmark runs, but the tools are already trying to enforce that property. |

| difflines | H,N | 10 | Number of lines of differences to print when comparing results. |

| enable_monitor | H,N | yes | If this variable is set to no, then all of the monitoring hooks are disabled. This can be overridden by setting force_monitor. force_monitor is new with CPU 2017 |

| Option | Use In | Default | Meaning |

| env_vars or envvars |

H,N | no | If set to yes, environment settings can be changed using ENV_* options in the config file. Note that you cannot change OMP_NUM_THREADS using this feature. Use threads. |

|

Example: Consider the config file below, which creates a binary with the requested label: $ cat PerformabilityQOS.cfg runlist = 520.omnetpp_r iterations = 1 size = test tune = peak label = srini 520.omnetpp_r=peak: CXX = g++ CXX_VERSION_OPTION = -v OPTIMIZE = -O1 $ date Mon Oct 3 17:49:46 PDT 2016 $ runcpu -c PerformabilityQOS | grep -i -e error: -e success Build successes for intrate: 520.omnetpp_r(peak) Success: 1x520.omnetpp_r $ go 520.omnet exe /export/home/rc2/benchspec/CPU/520.omnetpp_r/exe $ ls -g | cut -b 27-88 Oct 3 17:52 omnetpp_r_peak.srini $(Notes about examples) The binary just above depends on certain libraries. What if they aren't available, or have been moved? $ ldd omnetpp_r_peak.srini ... version `GLIBCXX_3.4.20' not found (required by ./omnetpp_r_peak.srini) ... version `CXXABI_1.3.8' not found (required by ./omnetpp_r_peak.srini) In the config directory, a second copy of the config file has been created, with the addition of env_vars and a line that inserts a new directory into the front of the library path. As shown below, this fixes the problem. $ diff -u PerformabilityQOS.cfg PerformabilityQOS.2.cfg

--- PerformabilityQOS.cfg 2016-10-03 17:52:39.000000000 -0700

+++ PerformabilityQOS.2.cfg 2016-10-03 17:54:45.000000000 -0700

@@ -3,8 +3,10 @@

size = test

tune = peak

label = srini

+env_vars = 1

#

520.omnetpp_r=peak:

+ ENV_LD_LIBRARY_PATH = %{ENV_SPEC}/libraries:%{ENV_LD_LIBRARY_PATH}

CXX = g++

CXX_VERSION_OPTION = -v

OPTIMIZE = -O1

$ runcpu -c PerformabilityQOS | grep -i -e error: -e success

error: a total of 1 children finished with errors

$ runcpu -c PerformabilityQOS.2 | grep -i -e error: -e success

Success: 1x520.omnetpp_r

$

Notice above that the original fails, and the modifed version with env_vars succeeds. Other notes:

|

|||

| Option | Use In | Default | Meaning |

| expand_notes | H | no | If set, will expand variables in notes. This capability is limited because notes are NOT processed by specmake, so you cannot do repeated substitutions. |

| expid | H | If set to a non-empty value, will cause executables, run directories, results, and log files to be put in a subdirectory (with the same name as the value set) in their normal directories. For example, setting expid = CDS will cause benchmark binaries to end up in exe/CDS, run directories to end up in run/CDS, and results and logs in $SPEC/result/CDS. | |

| fail | H,N | no | If set, will cause a build or run to fail. |

| fail_build | H,N | 0 | If set, will cause a build to fail. For example, you could say something like this: 519.lbm_r=default: #> I am posting this config file for use by others in the #> company, but am forcing it to fail here because #> I want to force users to review this section. #> #> Once you find your way here, you should test whether #> bug report 234567 has been fixed, by using the first #> line below. If it has not been fixed, then use the #> second. In either case, you'll need to remove the #> fail_build. #> #> - Pney Guvaxre #> Boomtime, the 66th day of Confusion in the YOLD 3172 # OPTIMIZE = -Osuperduper # OPTIMIZE = -Omiddling fail_build = yes In the example above, the build is forced to fail until the user examines and modifies that section of the config file. Notice that Pney has used protected comments to cause the comments about the internal bug report to disappear if the config file were to be published as part of a reportable run. |

| fail_run | H,N | no | If set, will cause a run to fail. |

| Option | Use In | Default | Meaning |

| feedback | H,N | yes | The feedback option applies an on/off switch for the use of feedback directed optimization (FDO), without specifying how the feedback will be done.

The interaction between feedback and these other options is described in the section on Using Feedback, below. |

| flagsurl | H | none | If set, retrieve the named URL or filename and use that as the "user" flags file. If the special value "noflags" is used, runcpu will not use any file and (if formatting previously run results) will remove any stored file. Automated processing of flags is explained in flag-description.html. If you want to list more than one flagsfile, the recommended method is by using numbered continuation lines, for example: flagsurl1 = mycompiler.xml

flagsurl2 = myplatform.xml

Using other methods (such as backslash continuation) to specify multiple flags files may appear to work, but may result in unexpected differences between the original config file and the config file as written by output format config. Multiple flags files will typically be needed, because flags files are separated into two types, "compiler", and "platform". |

| force_monitor | H,N | no | If this variable is set to yes, then all of the monitoring hooks are enabled, regardless of settings that would otherwise turn them off. This means that every invocation of specinvoke will be subject to monitor_specrun_wrapper, and all command invocations will be subject to monitor_wrapper. This includes things that would normally not be subject to monitoring, such as FDO training runs, input generation commands, and commands used for validating benchmark output such as specdiff. force_monitor is new with CPU 2017. |

| Option | Use In | Default | Meaning |

| http_proxy | H | In some cases, such as when doing version checks and loading flag description files, runcpu will use HTTP to fetch a file. If you need to specify the URL of a proxy server, this is the variable to use. By default, no proxy is used. Note that this setting will override the value of the http_proxy environment variable. For example, one might set: http_proxy = http://webcache.tom.spokewrenchdad.com:8080 |

|

| http_timeout | H | 30 | This is the amount of time (in seconds) to wait while attempting to fetch a file via HTTP. If the connection cannot be established in this amount of time, the attempt will be aborted. |

| idle_current_range | H | none | Set the maximum current in amps to be measured by the power analyzer(s) for the idle power measurement. If you are unsure how to determine this, see the suggestions in the section on Power Measurement. New |

| ignore_errors | H | no | Ignore certain errors which would otherwise cause the run to stop. Very useful when debugging a new compiler and new set of options: with this option set, you'll find out about all the benchmarks that have problems, instead of only finding out about the first one. |

| ignore_sigint | H | no | Ignore SIGINT. If this is set, runcpu will attempt to continue running when you interrupt one of its child processes by pressing ^C (assuming that you have ^C mapped in the common way). Note that this does NOT cause runcpu itself to ignore SIGINT. |

| info_wrap_columns | H | 50 | When set to a value greater than 0, attempts to split non-notes informational lines such that they are no longer than info_wrap_columns columns wide. Lines are split on whitespace, and newly created lines are guaranteed to have at least the same indentation as the original line. If a line contains an item that is longer than info_wrap_columns, a warning is logged and the original line is left unchanged. |

| Option | Use In | Default | Meaning | ||||

| iterations | H | 3 | Number of iterations to run. Reportable runs require either:

Reportable runs must use 2 or 3 iterations. Here is how the settings for iterations and reportable affect each other:

|

||||

| keeptmp | H | no | Whether or not to keep various temporary files. If you leave keeptmp at its default setting, temporary files will be automatically deleted after a successful run. If not, temporary files may accumulate at a prodigious rate, and you should be prepared to clean them by hand. Temporary files include:

|

||||

| label | H | none | An arbitrary tag for executables, build directories, and run directories.

label=jun12.old.CC label=jun12.new.CC label=jun14-flagday label=jun15-jeff.wants.yet.another.test If a label is used as a section specifier, it can be referenced from the runcpu command line. runcpu --label=yusoff [...] ERROR: The label 'yusoff' defines no settings in the config file! The error can be disabled if you set allow_label_override=yes. |

||||

|

|||||||

| Option | Use In | Default | Meaning |

| line_width | H | 0 | Line wrap width for screen. If left at the default, 0, then lines will not be wrapped and may be arbitrarily long. |

| locking | H | yes | Try to use file locking to avoid race conditions, e.g. if more than one copy of runcpu is in use. Although performance tests are typically done with only one copy of runcpu active, it can be handy to run multiple copies if you are just testing for correctness, or if you are compiling the benchmarks. |

| log_line_width | H | 0 | Line wrap width for logfiles. If your editor complains about lines being too long when you look at logfiles, try setting this to some reasonable value, such as 80 or yes32. If left at the default, yes, then lines will not be wrapped and may be arbitrarily long. |

| log_timestamp | H | no | Whether or not to prepend time stamps to log file lines. |

| Option | Use In | Default | Meaning |

| mail_reports | H | all | The list of report types to mail. The format and possible values are the same as for output_format, with the addition of log, which will cause the

current log file to be sent. The default is for all files associated with the run to be mailed (so, this will include

what you listed as your desired output_format plus log (the log file) and rsf (the

rawfile). You can cut your email down to the bare essentials with something like this:

mailto=fast.guy@welovebenchmarks.org output_format=text,mail mail_reports=textIf none of the requested report types were generated, no mail will be sent. |

| mailcompress | H | no | When using the 'mail' output format, turning this on will cause the various report attachments to be compressed with gzip. |

| mailmethod | H | smtp | When using the 'mail' output format, this specifies the method that should be used to send the mail. On UNIX and

UNIX-like systems, there are three choices: 'smtp' (communicate directly with an SMTP server over the network), 'sendmail'

(try invoking sendmail directly from locations where it is commonly installed), and 'qmail' (try invoking

qmail-inject from locations where it is commonly installed). On Windows systems, only 'smtp' is available.

SMTP is the recommended setting.

Using a sendmail or qmail-inject program from a non-standard location is possible only by setting the PERL_MAILER environment variable. See the Mail::Mailer documentation for details. |

| mailport | H | 25 | When using the 'mail' output format, and when the mailmethod is 'smtp', this specifies the port to use on the mail server. The default is the standard SMTP port and should not be changed. |

| mailserver | H | 127.0.0.1 | When using the 'mail' output format, and when the mailmethod is 'smtp', this specifies the IP address or hostname of the mailserver through which to send the results. |

| mailto | H | '' | The address or addresses to which results should be sent when using the 'mail' output format. If multiple addresses are specified, they should be separated by commas or whitespace. Each address should consist only of the name@domain part (i.e. no "full name" type info). The addresses are not checked for correct formatting; if a mistake is made, the results may be sent to an unknown location. Think: comp.arch. OK, probably not there, but seriously be careful about security on this one. Config files as posted at www.spec.org/cpu2017 will not include whatever you put on this line (thus, spambots will not see the contents of this field). Note that to get your reports mailed to you, you need to specify both mail as an output_format and an address to which they should be mailed. For example: mailto=fast.guy@welovebenchmarks.org output_format=text,mail If no addresses are specified, no mail will be sent. |

| Option | Use In | Default | Meaning |

| make | H,N | specmake | Name of make executable. Note that the tools will enforce use of specmake for reportable results. |

| make_no_clobber | H,N | no | Don't delete directories when building executables. The default is no, meaning "clobber". The "yes" option, meaning "avoid clobbering", should only be used for troubleshooting a problematic compile. The tools will not allow you to use this option when building binaries for a reportable result. Note that you could issue multiple successive runcpu commands with this option set (either in the config file, or with the --make_no_clobber switch), and the build directories will be preserved. But once you remove make_no_clobber (allowing it to default back to no), then the tools will attempt a normal build with a fresh build directory. |

| makeflags | H,N | '' | Extra flags for make (such as -j). Set this to -j n where n is the number of concurrent processes to run during a build. Omitting n or setting it to zero unlimits the number of jobs that will be run in parallel. Use with care! Make flags should be used here only if you are familiar with GNU make. (The program specmake is GNU Make under another name to ensure no accidental conflicts with other Make utilities you might have. The GNU Make Manual can be consulted, and you can also say specmake --help.) Note that requesting a parallel build with makeflags = -j N causes multiple processors to be used at build time. It has no effect on whether multiple processors are used at run time, and so does not affect how you report on parallelism. |

| mean_anyway | H | no | Calculate mean even if invalid. DANGER: this will write a mean to all reports even if no valid mean can be computed (e.g. half the benchmarks failed). A mean from an invalid run is not "reportable" (that is, it cannot be represented in public as the SPEC metric). |

| minimize_builddirs | H | no | Try to keep working disk size down during builds. |

| minimize_rundirs | H | no | During a run, try to keep working disk size down. Cannot be used in a reportable run. |

| nobuild | H | no | Do not attempt to build benchmarks. Useful to prevent attempts to rebuild benchmarks that cannot be built. Also comes in handy when testing whether proposed config file options would potentially force an automatic rebuild. |

| Option | Use In | Default | Meaning |

| no_input_handler | H,N | close | Method to use to simulate an empty input. Choices are:

Normally, this option should be left at the default; it was actually added to the tools for the benefit of a different SPEC suite that needed the feature. If a reportable run for CPU 2017 uses this feature, an explanation should be provided as to why it was used. |

| no_monitor | H,N | '' | Exclude the listed workloads from monitoring via the various monitor_* hooks. |

| notes_wrap_columns | H | 0 | When set to a value greater than 0, attempts to split notes lines such that they are no longer than notes_wrap_columns columns wide. Lines are split on whitespace, and newly created lines are guaranteed to have at least the same indentation as the original line. If a line contains an item that is longer than notes_wrap_columns, a warning is logged and the original line is left unchanged. |

| notes_wrap_indent | H | ' ' | When line wrapping is enabled (see notes_wrap_columns), this is the string that will be prepended to newly created lines after the indentation from the original line is applied. The default is four spaces, but it can be set to any arbitrary string. |

| Option | Use In | Default | Meaning | |||||||||||

| output_format | H | all | Format for reports. Valid options are listed at runcpu.html under --output_format; major options include txt (ASCII text), html, pdf, and ps. You might prefer to set this to txt if you're going to be doing lots of runs, and only create the pretty reports at the end of the series. See also the information in runcpu.html about --rawformat. | |||||||||||

| output_root | H |

If set to a non-empty value, all output files will be rooted under

the named directory, instead of under $SPEC (or %SPEC%).

You can navigate a rooted directory with ogo.

|

||||||||||||

| ||||||||||||||

| Option | Use In | Default | Meaning |

| parallel_test | H | #base copies |

For reportable runs, the tools verify that benchmark binaries get the correct answer for the test and train workloads. The time required for such verification does not count toward the reported score, but does add to the cost of benchmarking. To reduce that cost, you can run multiple tests simultaneously, by setting this option to anything higher than 1. For SPECrate runs, the default is the number of base copies. For SPECspeed runs, the default is 1, that is, effectively off. Note that If you turn parallel_test on for SPECspeed, the number of threads is silently forced to 1 during parallel testing. This is done in order to prevent accidental system overload. For non-reportable runs, parallel testing is disabled, unless you turn it on by setting parallel_test_workloads to one or more of "test" "train", or "ref". If the feature is enabled, and if parallel_test_submit is also set, the settings for submit and bind will be used to distribute jobs if they're set. Notes:

The above notes apply to all the parallel_test switches. |

| parallel_test_submit | H | no | Whether or not to use your submit and bind settings when doing parallel_test. When you specify parallel_test > 1, by default your submit and bind settings are not applied, because typically the operating system's default policies can be trusted to do an adequate job scheduling this (non-timed) work. Notes: See notes under parallel_test, above. |

| parallel_test_workloads | H | no | Which workload classes to run in parallel for non-reportable runs when parallel_test is enabled. The parallel_test_workloads feature is new with CPU 2017. Notes: See notes under parallel_test, above. |

| Option | Use In | Default | Meaning | ||

| plain_train | H,N | yes | When set to yes (or true or 1), does not apply any submit commands to the feedback training run. It also causes the monitor_* hooks to be ignored for the feedback training run. | ||

| power | H | no |

Enable/disable the optional power measurement mode.

New

After those fields have been set, then:

See the section on Power Measurement, below. |

||

| power_analyzer | H | none |

Network location (name and port) for the power analyzer to be used by the SPEC Power/Temperature

Daemon (PTD).

New

If you are using more than one power analyzer, separate them by commas.

The power_analyzer field tells the run time software where to find the

analyzer. You also need to tell humans about your analyzer, using descriptive fields with matching {id}

names, for example:

power_analyzer = hex-analyzer001:8888 hw_power_hexanalyzer0018888_cal_date = 3-Nov-2018 hw_power_hexanalyzer0018888_connection = Serial over USB See the section on Power Measurement, below. |

||

| preenv or pre_env |

H | yes | Use preENV_ lines in the config file. When this option is set (the default), lines of the form preENV_<variable> = <value> will cause runcpu to set the specified environment variable to value and re-exec runcpu to perform the run. The restart is done in order to ensure that the entire run takes place with the new settings. You can set preENV_SOME_VARIABLE = value only in the header section or a section using one these benchmark specifiers: default: intrate: fprate: intspeed: fpspeed: Any attempt to use preENV_ in other sections is silently

ignored.

Example: Commonly, SPECspeed2017 Floating Point users will set a large stack for 627.cam4_s. You can do this by adding a line similar to this one: preENV_OMP_STACKSIZE = 120M to the header section (top) of your config file, or by adding a new section for fpspeed: fpspeed: preENV_OMP_STACKSIZE = 120M The exact size needed will vary depending on your operating system and compiler. You might need to adjust it. See also the Examples, in your installed SPEC CPU 2017 tree, in the config directory.

|

| Option | Use In | Default | Meaning |

| rebuild | H | no | Rebuild binaries even if they exist. |

| reportable | H | yes | Strictly follow reporting rules, to the extent that it is practical to enforce them by automated means. The tester remains responsible for ensuring that the runs are rule compliant. You must set reportable to generate a valid run suitable for publication and/or submission to SPEC. Reportable runs must use 2 or 3 iterations. Here is how the settings for iterations and reportable affect each other:

|

| runlist | H | none | Benchmarks or sets to run. Names can be abbreviated, just as on the command line. See the long discussion of run order in runcpu.html. |

| save_build_files | H,N | none | After a build is finished, files matching any of the space-delimited wildcard patterns in this variable will be gathered up and saved. When a non-reportable run is being set up, those files will be unpacked into the run directory when the executable is copied in. The auxiliary file package is ignored when setting up reportable runs. The save_build_files feature is new with CPU 2017. |

| section_specifier_fatal | H | yes | While parsing the config file, if a section specifier is found that refers to an unknown benchmark or benchset, an error is output and the run stops. Set section_specifier_fatal=no in the header section of your config file to convert this error into a warning and allow the run to continue. The ability to convert section specifier errors into warnings is probably of use only for benchmark developers. |

| Option | Use In | Default | Meaning |

| setprocgroup | H | yes | Set the process group. On Unix-like systems, improves the chances that ^C gets the whole run, not just one of the children. |

| size | H | ref | Size of input set: test, train, or ref

You might choose to use runcpu --size=test while debugging a new set of compilation options. Reportable runs automatically invoke all three sizes: they ensure that your binaries can produce correct results with the test and train workloads and then run the ref workload either 2 or 3 times for the actual measurements. Caution: When requesting workloads, you should use only the terms test, train, or ref. You should stop reading here. Still reading? Sigh. OK, here are the possibilities.

|

| Option | Use In | Default | Meaning |

| src.alt | N | none | Name of a SPEC-approved alternate source.

|

About alternate sources: Sometimes a portability issue may require use of different source code for a benchmark, and SPEC may issue a src.alt, which is a compressed tar file containing modifications, created by makesrcalt. To use a src.alt, see the instructions posted with it at

www.spec.org/cpu2017/src.alt.

$ cd $SPEC # or on Microsoft Windows: cd %SPEC% $ specxz -dc nnn.benchmark.FixMumble.tar.xz | spectar -xvf - $ cat README.nnn.benchmark.src.alt.FixMumble.txt The README will explain what to add to your config file and any other instructions that are needed. After you unpack it, a directory is created under under <benchmark>/src/src.alt/ and a set of patches are stored there. You can look at the patches using dumpsrcalt, but it may be easier to just apply the src.alt and look at a build directory. Example: This config file builds with or without a src.alt, depending on the runcpu setting for the --label. $ cat testme.cfg action = buildsetup runlist = nnn.benchmark tune = base default: CC = gcc CC_VERSION_OPTION = -v nnn.benchmark=base=without: OPTIMIZE = -O2 nnn.benchmark=base=with: OPTIMIZE = -O2 srcalt = FixMumble To populate build directories: runcpu --label=without --config=testme runcpu --label=with --config=testme Then, if you visit benchspec/CPU/nnn.benchmark/build you can compare the directories. |

|||

| Option | Use In | Default | Meaning |

| strict_rundir_verify | H | yes | When set, the tools will verify that the file contents in existing run directories match the expected checksums. Normally, this should always be on, and reportable runs will force it to be on. Turning it off might make the setup phase go a little faster while you are tuning the benchmarks. Developer notes: setting strict_rundir_verify=no might be useful when prototyping a change to a workload or testing the effect of differing workloads. Note, though, that once you start changing your installed tree for such purposes it is easy to get lost; you might as well keep a pristine tree without modifications, and use a second tree that you convert_to_development. |