Google AI Edge

On-device AI for mobile, web, and embedded applications

On-device solutions from models to pipelines

Accelerate ML deployment, optimize pipelines, and easily access powerful LLMs

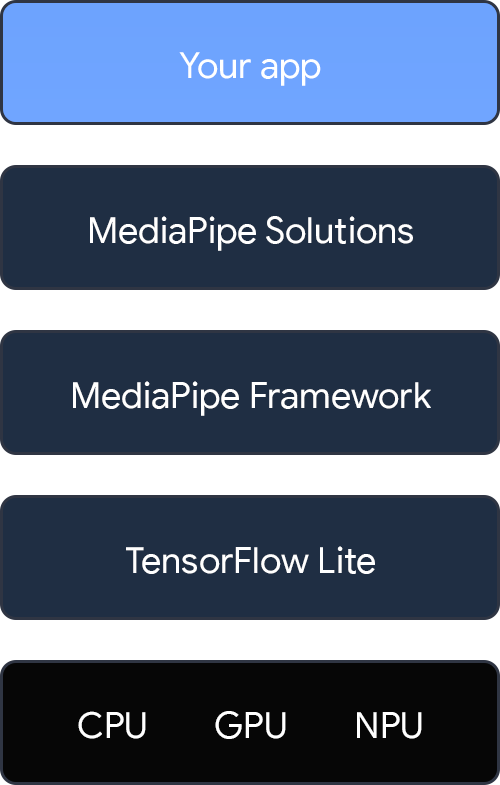

An end-to-end stack featuring both high- and low-level components

What's new from I/O?

MediaPipe

Easily create innovative on-device ML solutions

Solve common challenges with MediaPipe

TensorFlow Lite

A lightweight, multi-framework library for deploying models on mobile, web, and microcontrollers.

Generative AI, running on-device

MediaPipe LLM Inference API

Run LLMs completely on-device to perform a wide range of tasks, such as generating text, retrieving information in natural language, and summarizing documents. The API provides built-in support for multiple text-to-text large language models, so you can apply the latest on-device generative AI models to your apps and products.

Torch Generative API

Author high-performance LLMs in PyTorch, then convert them to run on-device with the TensorFlow Lite (TFLite) runtime.

Gemini Nano

Access our most efficient Gemini model for on-device tasks via Android AICore. Coming soon to Chrome.

Why deploy ML on edge devices?

Latency

Skip the server round trip for easy, fast, real-time media processing.

Privacy

Perform inference locally, without sensitive data leaving the device.

Cost

Use on-device compute resources and save on server costs.

Offline availability

No network connection, no problem.