Bounce Tracking Protection #6

Comments

|

Some context: we did some measurement of this ~1 yr ago. https://brave.com/redirection-based-tracking/ I'd be very interested in other numbers folks might have, especially as it might help us understand how this risk compares against other risks on the platform. (Not that we shouldn't trace down every leak in the platform, but I'm interested in the highest-marginal-benefit ranking.) |

|

To highlight a similar mechanism for completeness, sorry if it's documented elsewhere and not considered bounce tracking:

|

Interesting. Have you seen this in the wild for tracking purposes? I wouldn't call it bounce tracking, rather insta-popup tracking or brief popup tracking. Maybe file as individual issue? I'd love to hash it out. |

|

We should definitely defend against this kind of pop-up based tracking if it's being exploited. (Unfortunately, it sounds rather similar to pop-up based OAuth flows.) |

This is cool data! I had a hard time figuring out how prevalent bounce tracking is from that post, or in particular the subset we are calling bounce tracking. Bounce tracking is a subset of first-party redirect tracking (which also includes things like URL shorteners, or sites that send all outgoing links through a redirect) which in turn is a subset of redirect tracking (which can include redirects in third-party context). But I couldn't even figure out how prevalent redirect tracking in general is. Help appreciated! As mentioned by John, we know that at least one significant tracking firm was providing bounce tracking for Safari users across a number of sites, before ITP rendered it ineffective. We don't know current prevalence though. |

|

@othermaciej thanks! We used the measurements to estimate how often users would hit bounce trackers, using a bunch of random walks of the web, weighted by initial website popularity. Not perfect of course, but a useful first cut. Our initial plan was to use the above to identify bounce tracking domains (e.g. domains that have storage read or written to, but were only intermediate in some bounce). E.g.

the "2"s in the above are what got counted in the research behind the blog post, and what we started building lists of. The short term plan was to just block storage on these domains, even in 1p context, until there was a user gesture. We had some more ideas too that were promising, but have (so far) been triaged down, since we didn't think this was where the best "privacy bang for the buck" was, but the partial list includes:

(all "white boarding stage stuff", but where our mind was at at the time) |
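The classification idea described above (domains that had storage read or written, but only ever appeared as an intermediate hop in a bounce) can be sketched roughly as follows. This is an illustration only, not Brave's actual pipeline: the `Hop` record, its fields, and the `bounce_candidates` function are hypothetical names, and the crawl data format is assumed.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Hop:
    """One top-level navigation step seen in a crawl (hypothetical record)."""
    domain: str            # registrable domain of the page
    touched_storage: bool  # True if cookies or other storage were read/written
    user_gesture: bool     # True if the user interacted with the page


def bounce_candidates(chains: list[list[Hop]]) -> set[str]:
    """Return domains that touched storage but were only ever intermediate hops."""
    storage_while_intermediate: set[str] = set()
    visited_as_destination: set[str] = set()
    for chain in chains:
        last = len(chain) - 1
        for i, hop in enumerate(chain):
            if i == 0 or i == last or hop.user_gesture:
                # Start page, final page, or a page the user actually used.
                visited_as_destination.add(hop.domain)
            elif hop.touched_storage:
                storage_while_intermediate.add(hop.domain)
    return storage_while_intermediate - visited_as_destination


chains = [
    [Hop("news.example", False, True),
     Hop("tracker.example", True, False),
     Hop("shop.example", True, False)],
    [Hop("shop.example", True, True),
     Hop("sso.example", True, False),
     Hop("app.example", True, False)],
    [Hop("sso.example", True, True)],
]
candidates = bounce_candidates(chains)
```

In this made-up data, `sso.example` is also visited directly with a user gesture, so it is not flagged, which mirrors the "until there was a user gesture" condition in the short-term plan.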

|

Thanks for publishing this proposal! I would like to point out that adding on-device based models could potentially harm privacy and security of users so I think that the crawlers-based approaches that you suggest should be preferred. |

Hi! We are well aware of that research. However, it's the observability of global state that's problematic, not the global state itself. And when looking at the observability you have to weigh it against just letting a tracking vector exist. Often you'll find that removing the tracking vector does more for privacy than avoiding the observability of global state. Ideally, you have neither. For browsers without comprehensive tracking prevention on by default, cookies, web storage, and HTTP cache are readily available global state that's observable cross-site. I.e. that's where you have to start if observability of global state is something you want to defend against. |

Distinguishing legitimate federated sign-on scenarios and legitimate analytics and affiliate scenarios from permissionless bounce tracking seems quite hard. As it is, the "user interaction" signal currently in ITP seems likely to have both false positives and false negatives with the consequence of making it harder for users to stay logged in to authentication providers they care about. As @othermaciej points out

|

Observing the side effects that you mentioned in the proposal would be trivial, that's why I proposed to go for the shared state across all users. Since we are trying to address the problem let's try to tackle it once and for good. I feel like moving the tracking capability to a different vector would be less beneficial than removing it completely. (I think that if there is a local model that changes navigation behavior it will probably always be observable) IMO tracking users based on a list shared across millions of them would likely be more complicated.

I agree something has to be done but not at the risk of harming security. This is not a situation in which the worst case is an ineffective mitigation like it would be for a poorly configured CSP, this is a case similar to the XSS auditor, which introduced XSLeaks in almost every website in existence for a debatable advantage over XSS. Overall, I think the crawler solution would be more solid. I'm not against this proposal, just here vouching for one of the options you put out 😸 |

|

Crawler-based classification has holes too: there may be trackers that are not detectable from the network position of the crawlers but are detectable for a given user (due to geo-specific redirects, or even trackers identifying the IP block of the crawlers). Going back to classification: in this case, let's consider the proposal with a single bit of "classified as a bounce tracker" that puts a site into SameSite=Strict jail. This is pretty hard to abuse. It's detectable only during an actual attempt at bounce tracking, and combining the bits into a usable unique ID requires bouncing through many (32ish) distinct domains every time serially, which is likely to be a prohibitive performance cost. And that bouncing will itself cause all of them to be identified as bounce trackers, so IDs of this form will self-destruct after only a few uses at most. Capping the length of redirect chains is also likely to be web compatible, at a level lower than what would be needed to pull this off. Let me know if there's a mistake in this analysis. That said, a combo of crawling and client-side detection may be the right balance. |
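The arithmetic behind the "32ish" figure can be checked with a small sketch. It assumes each domain's classified/unclassified status leaks exactly one bit, so a unique ID needs one serial bounce per bit. The function names and the redirect cap of 10 are assumptions for illustration, not values from any browser.

```python
def bits_needed(population: int) -> int:
    """Bits required to give every member of `population` a distinct ID."""
    return (population - 1).bit_length()


def id_fits_in_chain(population: int, max_redirects: int) -> bool:
    """One domain carries one bit, so the chain needs one hop per bit."""
    return bits_needed(population) <= max_redirects


HYPOTHETICAL_REDIRECT_CAP = 10  # assumed cap, chosen only for illustration

hops_for_four_billion = bits_needed(4_000_000_000)
feasible = id_fits_in_chain(4_000_000_000, HYPOTHETICAL_REDIRECT_CAP)
```

Under these assumptions, distinguishing a few billion users takes 32 serial bounces, while a cap of 10 hops would limit such a scheme to at most 1,024 distinct values.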

|

@snyderp, would it be fair to conclude from your comments on this issue that Brave would like to see this proposal taken up by the CG? |

|

I'm not sure I understand what the specific proposal is, but I'm very in favor of PrivacyCG working on this problem! :) |

We wanted to share this at an earlier stage than a crisp proposal to see if we can have this community group work its way through the issue and land in a shared solution. So the proposal is to take on the work of defining the vulnerability and enumerating defenses we believe work, possibly settling on a single one. The outcome could be standards language which conveys that user agents may put restrictions A, B, and C in place to defend against attack X. |

|

Ah, then if the proposal is "let's put our brains together and figure out a good, standards-focused, cross browser solution to this problem" then Brave is 100% on board (didn't mean to be a pedant, just didn't know if the proposal was substantive or procedural) |

|

This may need to be written up as an explainer about the problem and the solution space before we start drafting in spec-level detail |

|

Would you like time on the next call to talk about this proposal? |

|

I'd hope it's not a broad measure like a periodic purge of cookies for websites without user interaction. Tools like A/B testing for improving the user interface (of one organization) already have a hard time with ITP, and I hope solutions can be focused on the offenders and less on "everyone" |

If you're asking me, then yes, I can talk a bit about it on the next call. |

|

I agree this does sound remarkably similar to OAuth flows, which I think we would want to keep generally, though I can see an argument that removing this behavior is a reasonable push towards proposals like the Trust Token API, though that's a long-term plan. Is the intent here to whitelist specific known-to-be-for-OAuth domains? How are people who are currently attempting to block this behavior handling OAuth? |

|

Are folks interested in having an ad-hoc meeting to discuss this? cc @johnwilander @jackfrankland @pes10k @englehardt @othermaciej @AramZS If so, please file an issue here. |

|

I would be interested in attending a call on it |

|

@johnwilander are you up for leading this discussion in an ad-hoc meeting? |

|

Sure, I can lead the discussion. Would love help on scribing though. |

|

Thanks @johnwilander! We'll start working on scheduling this. |

|

I watched the entire event and I don't recall anything even remotely resembling a mention of Safari tracking. That would be major news that happens to be right in my area of research. You seem to be drawing a highly contrived conclusion tying a specific set of dark patterns that apps may use, then noting that Safari is an app, and from there concluding that they're doing it. The idea that the FTC is not providing proper transcripts is not serious. Regarding what users want, the situation is pretty clear. I'm citing a study by an established and highly trustworthy official statistics agency that asked users whether they want their browser to prevent their data from being shared: they do. You're citing a lobbying group that put a completely disingenuous false dichotomy in front of users — do you want to do it the IAB way OR do you want everything to become expensive? Let's not pretend that there's any kind of equivalence here. You're confusing "control" and "being forced to deal with it". Having my browser match my expectation is greater control precisely because I never have to deal with it. We have a great mechanism to address niche needs: browser extensions. For the small minority of users who do want their browser sharing data, that's a perfect way to enable this. A few clicks to install and they can share their data whichever way they want to. Anyway — I just came here to support this work. Support it I do. |

|

@johnwilander I feel this has become contentious, and that you do not plan to explain how my comments are out of scope. I think it would be best if we took a break for the moment. @darobin I see and respect your support. I am afraid much of what you object to in what I say is not of my making; rather, it is the W3C process that I am trying my best to follow. To give examples: you are right that I am speaking of "may", and there is a good reason. "May" over "does" is the threat model dictated by most proposals that are being discussed here at the moment and I am using their methodology; it is not one I chose. "May" is considered a threat even if it doesn't necessarily happen, or happen today. This is why I only go so far as to indicate "may". Also, I think before you posted I did re-read the transcript and modify my post (clearly marked what was changed) as you were in some ways correct but in some ways, I believe, mistaken. I also had watched that segment. Browser extensions to share data in this way would also not be allowed under some theories of privacy, namely the one being discussed here, as they seek to eliminate tracking even with consent and make it 100% impossible. Additionally, I don't think it's fair to expect most users to know about the threat of paywalls and walled gardens if they are not told. I didn't even know. You criticize the IAB quite harshly, but I have read other articles as well and this was the one I had on hand in a Slack post from a colleague. |

|

Browsers are on a path to eliminate vectors for cross-site tracking. The Privacy CG's Storage Partitioning Work Item is an example of the community working to identify and define current vectors that enable such activity so that browsers can directly address them. Bounce tracking is another vector that can currently be used for passive cross-site tracking of users, so it's appropriate to discuss what undesirable primitives it enables and, where the primitives run counter to the stated user privacy-protecting goals of many user agents, discuss possible solutions to solve them. There are many existing solutions that build on top of browser APIs in ways that may no longer be viable as browsers work together to evolve the web platform's design to protect users by default. This reality is why new APIs which aim to solve existing use cases while aligning with the privacy-protecting-by-default platform are being proposed and discussed. We should focus our conversation for this issue on what technical mechanisms are viable to address the non-privacy-preserving aspects of bounce tracking. For use cases and scenarios that may be impacted by this work, I would recommend interested parties evaluate making separate proposals outside of this issue for new, privacy-preserving mechanisms to support those scenarios; this will enable those proposals to have their merits discussed in a more dedicated and intentional way. |

|

@TheMaskMaker Sorry but I don't understand what you are trying to say about the W3C process. If you have a specific process-related point, please cite the relevant Process document section. This is not about "theories of privacy". It's an established fact that interruptive prompting for data, like SWAN does, does not lead to people making informed decisions. Preventing such tricks from being used aligns with user interest. If some people want to go out of their way to install an extension, then we can talk about informed consent. It's also a more sustainable solution than the kind of deliberate circumvention of defensive measures that SWAN is built on. And the alternative to a data free-for-all isn't paywalls, it's a healthier ad ecosystem less dominated by the big tracking companies. |

|

@darobin Are you stating that most people cannot make informed decisions, hence we should remove choice? Or rather, that data collection and processing of personal data is a complex topic that most people do not spend time to educate themselves about and hence it is too difficult for non-technical audiences to understand the choice being prompted? Big "tracking" companies often track on user behavior on their own properties, which ought to raise similar concerns about people understanding what data is collected and how it is processed. I would love to hear your proposal on how small publishers or new startups ought to be able to compete against larger, established companies, when both personalization and advertising use cases often benefit from having access to greater amounts of input data (even when such data is deidentified)? |

Of course we can discuss the legal aspects of privacy, probably should, but we should not pretend any technology that emerges, even from a standards body, "establishes a legal basis for data processing among controllers". Only legal process can do that. Techniques to protect privacy are clearly in scope, but the main thrust of SWAN seems to be to bypass existing protections. |

|

I was recently pointed to a case study titled The Promise and Shortcomings of Privacy Multistakeholder Policymaking which covers W3C and DNT. I’m trying to learn from this and urge others to read it as well. @michael-oneill My reference to establishing a “legal basis” is to the contractual framework for data sharing created under SWAN.community (SWAN.co) model terms to ensure all parties play by the same rules. It is not a reference to a “lawful basis” under the UK/EU GDPR. Data protection regulators in particular encourage controllers to put in place data sharing agreements to provide certainty and clarity regarding each party’s obligations and in this way SWAN.co’s model terms support (not replace) controllers’ direct obligations under the law. Preferences in SWAN are designed to be based on the lawful basis of consent for UK/EU GDPR purposes. There is an ongoing debate (even amongst regulators) as to whether certain “subsequent processing” of cookie data can be based on legitimate interests e.g. for measurement purposes. SWAN.co can support the optionality for both bases but challenges the prevailing view that individuating purposes of use into several technical use cases achieves the “informed” consent standard demanded by the UK/EU GDPR. Several studies indicate users are not engaging effectively with the current typical arrangements, to the detriment of their privacy. SWAN’s starting position is that different types of cookies are neither “good” nor “bad” per se. Used responsibly, and with the individual end user’s meaningful consent, they have an important part to play in the preservation of the Open Web and the mission of the W3C. As the TAG recognized recently in their First Party Sets report, there is a conflict of interest where a browser is owned and operated for the owner’s commercial interests not the end user interests. This is noted to be contrary to the W3C’s design principles – see Put user needs first (Priority of Constituencies).
It is also worth reflecting on the W3C Design Principles that should be in line with the objectives of privacy protection where users are given a meaningful choice:

SWAN.co provides a legal framework to achieve the goals of all good actors who seek to provide people with choice, meaningful alternatives to choose from and hence enable them to preserve their privacy. The position taken by SWAN.co mirrors that of the UK’s ICO and CMA. It is essential that this group can debate technical, legal and economic issues if fully thought through solutions to problems are to be found.

The main thrust of SWAN.co is to improve people’s privacy and provide them with choice on the web which I believe is the focus of this group and the W3C. What are the “existing protections”? Is this a reference to existing ways that browsers are protected in becoming enhanced controllers rather than user agents? SWAN.co’s initial technical implementation does not bypass anything. I’m not even sure how one would bypass computer code implemented in a browser. It builds on long established standards of interoperability to share data among legally bound data controllers and processors in support of legal business operations. Other commentators such as @TheMaskMaker acknowledge this.

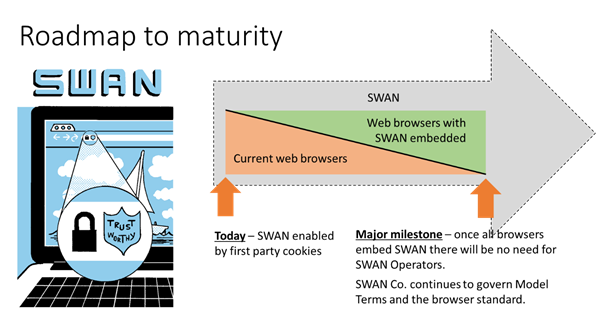

@johnwilander Could you provide a link to information that this issue is trying to solve and the problems you see which require “bounce tracking protection”? This might then help us establish use cases that will be impacted. It is the intention of SWAN.co to make a proposal to establish data sharing within the web browser based on data controller and processor relationships in the future. See the following slide from the presentation provided to the Improving Web Advertising (IWA) BG on SWAN.co in April. Privacy by design principles have been followed including the use of pseudo anonymous identifiers that support the right to be forgotten. Based on recent TAG reports on First Party Sets (FPS) such a proposal will require the security architecture of the web to evolve to recognize the legal relationship between controllers and processors rather than just domain = single data controller. Google and others who support FPS all appear to be seeking a similar change. Perhaps @hober who chairs this group and is a TAG member could comment on current TAG thinking in this regard? Has thinking evolved since your blog of October 2020? Given my experiences of the W3C it seems like the required work from TAG, then the subsequent proposals, debates, trials and then mass deployment will take at least 24 months. The publishing and advertising industry and their suppliers are working towards an effective deadline of October 2021 to no longer rely on so called third-party cookies. If they cannot find a solution open web publishers will be further threatened as advertisers direct their spend to more stable, less risky and high ROI alternatives. This is explained in the CMA report I referenced earlier. Solutions are needed that will work today at scale. 
It would be helpful if Apple could confirm that they will not interfere with the long-established primitives defined in long-established technical standards that support the legally compliant SWAN solution until such time as a viable alternative is defined and widely deployed. Perhaps representatives from other browsers could also provide similar assurances to enable a rational and productive debate to occur? No one responds well when the industry they work in or support is being threatened without a rational or legal basis and they are being discriminated against based on the actions of a minority.

I’m confused by this comment. SWAN.co interrupts the user once and so long as the data is retained by the web browser it will not do so again until 90 days have elapsed. People can update their preferences at any time, or using private mode wipe them when the browser closes. Where are the “tricks”? I see none. Compared to current practices that lead to “consent fatigue” SWAN.co seeks to avoid interrupting users every time they visit a website and explaining complex laws and many data controllers, processors and purposes; SWAN.co’s approach seems preferable. No one is forcing you or any publisher or advertiser to use SWAN.co. It is important to ensure those that wish to use SWAN.co can do so freely. Without SWAN.co the alternative will be for website operators to ask people to provide directly identifiable personal information, such as email addresses or logins, every time they visit a web site. Not all website operators will have the scale or brand presence for such a solution to be remotely viable for them. In any case I don’t understand how that will yield a privacy improvement in practice. Until an alternative is found SWAN.co, or a solution like it, will be needed by many participants in the web ecosystem. @TheMaskMaker you make some interesting points. Competition issues in digital markets and self-preferencing are all related to the W3C antitrust guidelines. I would like these urgently updated to protect participants in debates such as this one. I have raised an issue in relation to the W3C process which is currently with the W3C AB. You might wish to direct your comments to that issue. I agree with @johnwilander that we should focus this thread on the issue of so-called bounce tracking and the impact of any changes. |

|

I am stating that we should not encourage choice architectures that work against users. This isn't new, it's been a constant area of improvement for the Web over the past two decades, going back to the ActiveX security model that gave users the "choice" to install viruses from the Web. The goal is not "choice", the goal is increased user agency. Choice will often decrease agency, particularly when it is interruptive or decontextualised — exactly as it is with SWAN (and making that a sticky decision makes it worse, since a decision made under a bad architecture is thus rendered persistent). Consent is useful where appropriate, but it should be rare, slow, difficult, specific, and very temporary. The typical case is consent to the processing of sensitive information provided as part of the collection form, and only for a specific case. Consent to persistent, non-specific, pervasive, and durable processing does not meet these criteria. Yes, big tracking companies are a problem. It's not a problem that will be solved by maintaining the same status quo that brought them into existence. "Let's do more of exactly the same" does not strike me as a particularly promising plan. I don't have a magic pony for "how small publishers or new startups ought to be able to compete against larger, established companies" for the very simple reason that no one does. But I do know that we can make the market support more competition or less competition. There are network effects in the use-value of data such that sharing it more disproportionally favours those who already have data. Today a small publisher (and we are all small publishers in the current game) is devalued because it cannot develop scarcity for its audience. We gave your way a try — it really, really failed. It's time to change that. |

|

When a user intentionally clicks a link to view content on a different site, the browser should of course allow top-level navigation to occur. If the user has visited the site they're navigating to before, and, depending on the jurisdiction, has consented to being remembered, it seems reasonable for the site to access data related to the previous visit before displaying meaningful content to the user. The ability to access storage data partitioned to the origin/site upon top-level navigation is required for this flow. Use of this mechanism for anything else, I believe, is an unintended side-effect of the functionality. There's especially a strong argument for user agents to consider bounce-tracking, or the top-level redirection through several domains that display no meaningful content for the purpose of data collection, an exploit, no matter how good the intentions are. |

|

Hi everyone-- one of the Privacy CG chairs here. As mentioned in my last comment, this issue is dedicated to discussing the ways in which bounce tracking allows passive cross-site communication and potential technical solutions for addressing it. At this point, we're veering way off topic. These side conversations are all reasonable to have in other forums, but not on this issue specifically. We'll unlock this issue early next week after which point we hope to see folks respect the request to remain on topic. If you have concerns, please reach out to the Privacy CG chairs at group-privacycg-chairs@w3.org. Thanks! |

A Defence Against Bounce Tracking

While it may be possible for user-agents to recognise bounce-tracking (generally, the use of redirection to hide cross-domain storage access) and block the servers that use it, the "arms-race" is bound to continue. Determined trackers will develop more obscure and sophisticated techniques and, while browser companies may be prepared to continue developing defenses, the resulting side-effects can sometimes end up damaging the web platform for the wider ecosystem. There needs to be a simple and more direct way to ensure users are protected from cross-domain tracking.

One way to do this, as pointed out at the top of this thread, is to purge all of a top-level site's data, both data local to the top-level origin and data partitioned to its embedded subresources, after the user stops interacting with the site. Since no state information is persisted outside the expiry "window", all tracking techniques based on client storage would be impossible. Fingerprinting attacks may still be possible of course, but that is another story, and defenses are already being built up against that.

There already exists an API, Clear-Site-Data, whereby servers can request via a response header that all their client-side data is deleted, and user-agents could simply arrange to execute this action some default time after the user stops interacting with a site. A timer would be (re)started on every user interaction, and data purged on the next visit after it completed, as if the browser had received a Clear-Site-Data header on the next access to the top-level origin. All the client-accessible site data can be removed this way: cookies, local and session storage, IndexedDB storage, execution contexts and cache. Sites could then request that the browser extend the default timeout, which it would only do after prompting the user for consent.

The default duration should be a value low enough to make the persisted state inadequate for tracking, but high enough to allow inter-site navigation and persist other expected state for average user interaction within a single "session". Sites with functionality requiring top-level state to be persisted for longer would be able to call for it using a suitably extended version of the Storage Access API. As in the case of embedded browsing contexts requesting access to their own top-level data, the user-agent would prompt the user and ask for their consent before implementing the new duration.

An argument against implementing a default restriction on first-party storage like this would of course be the risk of breaking existing functionality, but a transition could be managed by only triggering the new limit on first-party storage when a

A useful amelioration would be to allow a longer persistence for defined low-entropy "session" state data, which could be used to stop the rendering of "cookie consent" or other banners or popups once a user had rejected them. This would have to be carefully designed to avoid it being employed as a persistent tracker; for example, it could be a single named cookie with an entropy-restricted value and a moderately short duration, which would then not be subject to the default data purge action. An example could be: There could only be one of these cookies per origin because of the risk of carrying high-entropy values in a combined set of low-entropy names. Other exemptions for storage not to be subject to the purge might be added so that necessary, but privacy-preserving, functionality might be retained, always with the proviso of course that they were not capable of being co-opted by "bad actors" for tracking. |
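The timer mechanism in this proposal can be modelled with a minimal sketch. It is not an implementation of Clear-Site-Data or of any browser's storage layer: `PurgingStore`, `SiteState`, and the 30-minute `DEFAULT_WINDOW_S` are hypothetical, since the proposal does not fix a default duration.

```python
from dataclasses import dataclass, field

DEFAULT_WINDOW_S = 30 * 60  # assumed default; the proposal names no value


@dataclass
class SiteState:
    storage: dict[str, str] = field(default_factory=dict)
    last_interaction: float = 0.0
    window_s: float = DEFAULT_WINDOW_S


class PurgingStore:
    """Toy model: site data is purged on the first visit after the timer expires."""

    def __init__(self) -> None:
        self.sites: dict[str, SiteState] = {}

    def on_interaction(self, site: str, now: float) -> None:
        """(Re)start the expiry timer on every user interaction."""
        self.sites.setdefault(site, SiteState()).last_interaction = now

    def extend_window(self, site: str, window_s: float, user_consented: bool) -> bool:
        """Extend the window only if the user agreed when prompted."""
        if user_consented:
            self.sites.setdefault(site, SiteState()).window_s = window_s
        return user_consented

    def on_visit(self, site: str, now: float) -> dict[str, str]:
        """Return the site's storage, purging it first if the timer completed."""
        state = self.sites.setdefault(site, SiteState(last_interaction=now))
        if now - state.last_interaction > state.window_s:
            state.storage.clear()  # as if a Clear-Site-Data header had arrived
        return state.storage


store = PurgingStore()
store.on_interaction("a.example", now=0.0)
store.on_visit("a.example", now=1.0)["id"] = "x"
within_window = dict(store.on_visit("a.example", now=100.0))
after_window = dict(store.on_visit("a.example", now=DEFAULT_WINDOW_S + 1.0))
```

The purge is applied lazily on the next visit rather than by a background job, which matches the "data purged on the next visit after it completed" wording above.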

|

In preparation for the F2F discussion I'm trying to find information on the problem that this issue is attempting to solve. @johnwilander please could you provide a link to this information? |

The technical description of the problem in its original form is in the issue post under “Bounce Tracking.” If you are looking for a description of what cross-site tracking is, that’s out of scope for this issue but the threat model work in PING may be able to help you. If you are looking for information on why browser vendors want to prevent cross-site tracking, I’ll have to defer to each vendor’s tracking prevention policy. |

|

I think you are referring to this privacy threat model document. Correct? The document is labelled as follows.

I was hoping for concrete details of actual harms that you are seeking to address with this issue and who the perpetrators of those harms are. Does such support exist? I see the start of a pull request to outline the different positions. Perhaps as a next step this could be expanded to outline the specific harms and actors in relation to bounce tracking? In relation to browser vendors' policies, I don't see how they are relevant to this group or this issue. We're focused on improving privacy in practice, not coordinating the implementation of various web gatekeepers' commercial policies. |

The issue does not need to state "actual harms." There are browser vendors who want to prevent cross-site tracking and bounce tracking is an instance of cross-site tracking. Hence, it's useful to discuss if and how we can standardize prevention of bounce tracking. If you don't want to discuss how to prevent bounce tracking but rather discuss something else, I suggest you open a different issue in a suitable group within W3C. This is a work item proposal to jointly work on how to prevent bounce tracking. |

|

The charter of this group states the following as the mission.

As someone who is part of three of these four participation groups, I’m keen to understand how this proposal, like any other proposal in Privacy CG, improves users’ privacy in practice.

Those of us that are not browser vendors need to know very clearly why browsers want to prevent cross-site tracking. We need to understand justifications beyond individual corporate policies or nebulous references to privacy so that this issue can be debated rationally by all four participating groups. However, if this group is in fact a forum for web browser vendors to co-ordinate their changes then as a minimum the charter should be revised. |

|

@michael-oneill I may have misunderstood the proposal, but it seems to have substantial potential to severely break most identity single-sign-on flows. For example, both SAML and OpenID Connect use redirect mechanisms with link decoration to support single sign-on across disparate domains. These flows can contain explicit user behavior (i.e. consent messages) but can also be silent (e.g. the user has already given consent to share their identity between the IDP and the site). If I understand the proposal correctly, it would be easy for the IDP to be classified as a "bounce tracker" and have all its user state cleared. This would not only force the user to log in much more frequently, creating a poor user experience, but would also remove all trust the IDP has in the browser and the user, making the user's sign-in experience even more complicated (e.g. password + SMS verification). The reason is that IDPs today store some state in the browser instance recording that userX signed in to the IDP. This then enables the IDP to assert some level of trust in the browser instance. If all such state is removed, then the IDP MUST treat the sign-in attempt as "un-trusted" and use the more complicated user authentication flows. Are there provisions in the proposal to support identity providers? Or do you have any specific recommendations? |

|

@gffletch the data purged by my proposal would only be top-level + partitioned, the non-partitioned subresource data would not be affected. The existing Storage Access API could still be used to let SSO subresources see their (first-party) cookies etc. |

|

First, @michael-oneill and @gffletch, what you are discussing is already an active topic in the IsLoggedIn work item. See https://github.com/privacycg/is-logged-in#why-do-browsers-need-to-know and privacycg/is-logged-in#15. Second, there is no difference between first-party cookies and the cookies a third party gets access to through the Storage Access API. So I don't understand the distinction you make between "top-level" and "non-partitioned" data, Michael. Are you suggesting data is hidden from the first party and only accessible when a third party requests it through the Storage Access API? |

|

Thanks John. By "top-level" I mean storage accessible to the top-level frame, e.g. the site's own first-party cookies and everything controllable by Clear-Site-Data. |

|

Hi there. I hope it's OK to chime in here. How about some sort of way of notifying users that bounce tracking is occurring? It wouldn't even need to require user interaction to allow (although perhaps that could be configurable), just some way to tell people it's going on and the option to proactively block sites if wanted. I think in the case of SSO authentication, this wouldn't need to happen often so the notifications wouldn't be too annoying. Perhaps there could be an option for the user to say "this is OK from that site". In other cases, especially when it's happening a lot, informing the user would at least tell them something weird's happening. Detection of all cases would be easier than trying to selectively block good and bad cases. It doesn't "solve" the issue but it goes some way towards giving the end user more awareness. |

|

There are cases where I want to redirect to a new URL due to service termination or service integration with another company. This is a legitimate redirect use case that does not involve tracking. However, for a company that operates many services on subdomains, the number of redirects from the same domain will be large, and the company will be restricted by bounce tracking protection even though it is not tracking. Portal sites, for instance, often move services between domains. The redirector count threshold of 10 poses a risk, and I think we need a systematic, rather than ad hoc, method to detect legitimate uses. So we would like to ask that redirects that do not involve tracking not be counted by bounce tracking protection. For example, the following.

|

|

@johnwilander is everything in this proposal captured by the Navigational-Tracking Mitigations work item or is there something separate here that we should continue to discuss via this proposal? |

In the spirit of a community group, we’d like to share some of our Intelligent Tracking Prevention (ITP) research and see if cooperation can get us all to better tracking prevention for a problem we call bounce tracking.

Safari’s Old Cookie Policy

The original Safari default cookie policy, circa 2003, was this: Cookies may not be set in a third-party context unless the domain already has a cookie set in a first-party context. This effectively meant you had to “seed” your cookie jar as first party.
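As a rough illustration of that rule, the decision can be modeled as a single predicate. This is a minimal sketch, not Safari's implementation: `maySetCookie` and the `firstPartyCookieDomains` set (standing in for the cookie jar) are hypothetical names introduced here.

```typescript
// Hypothetical model of the circa-2003 policy described above.
// `firstPartyCookieDomains` stands in for the set of domains that already
// hold a cookie set in a first-party context.
function maySetCookie(
  domain: string,
  isThirdPartyContext: boolean,
  firstPartyCookieDomains: Set<string>,
): boolean {
  // First-party contexts were always allowed to set cookies.
  if (!isThirdPartyContext) {
    return true;
  }
  // Third-party contexts were allowed only if the jar was already "seeded".
  return firstPartyCookieDomains.has(domain);
}
```

Under this model, a domain that manages to appear as first party even once, for example through a navigational redirect, is afterwards allowed to set cookies as a third party.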

Bounce Tracking

When working on what became ITP, our research found that trackers were bypassing the third-party cookie policy through a pattern we call "bounce tracking" or "redirect tracking." Here's how it works:

Modern tracking prevention features generally block both reading and writing cookies in third-party contexts for domains believed to be trackers. However, it's easy to modify bounce tracking to circumvent such tracking prevention. Step 5 simply needs to pass the cookie value in a URL parameter, and step 6 can stash it in first-party storage on the landing page.
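The URL-parameter variation can be sketched as follows, to make clear why blocking third-party cookies alone does not stop it. This is an illustrative sketch only: the function names, the `tid` parameter name, and the `Map` standing in for first-party storage are all assumptions, not taken from any real tracker.

```typescript
// Redirector side (hypothetical): append the identifier to the destination URL
// before redirecting the user there.
function decorateDestination(
  destination: string,
  trackerId: string,
  param: string = "tid",
): string {
  const url = new URL(destination);
  url.searchParams.set(param, trackerId);
  return url.toString();
}

// Landing-page side (hypothetical): read the parameter and stash it in
// first-party storage. A Map stands in for cookies or localStorage here.
function stashFromUrl(
  landingUrl: string,
  storage: Map<string, string>,
  param: string = "tid",
): void {
  const value = new URL(landingUrl).searchParams.get(param);
  if (value !== null) {
    storage.set(param, value);
  }
}
```

Because the identifier travels in the navigation URL and is stored by the landing page as first party, no third-party cookie access is involved at any point.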

Bounce tracking is also hard to defend against since at the time of the request, the browser doesn’t know if it’ll be redirected.

Safari’s Current Defense Against Bounce Tracking

ITP defends against bounce tracking by periodically purging storage for classified domains that the user doesn’t interact with. Doing navigational redirection is one of the conditions that can get a domain classified by ITP so being a “pure bounce tracker” that never shows up in a third-party context does not suffice to avoid classification. The remaining issue is potential bounce tracking by sites that do not get their storage purged, for instance due to the fact that the user is logged in to the site and uses it.
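The purge rule can be approximated by the sketch below. It is a simplified model under stated assumptions, not ITP's actual logic: the `DomainRecord` shape, the `domainsToPurge` name, and the 30-day interaction window are hypothetical values chosen for illustration.

```typescript
// Hypothetical per-domain bookkeeping.
interface DomainRecord {
  domain: string;
  classified: boolean; // classified as a potential tracker, e.g. due to navigational redirects
  lastInteractionMs: number | null; // last first-party user interaction, if any
}

// Assumed interaction window; the source does not state a value.
const ASSUMED_INTERACTION_WINDOW_MS = 30 * 24 * 60 * 60 * 1000;

// Return the domains whose storage would be purged at time `nowMs`.
function domainsToPurge(
  records: DomainRecord[],
  nowMs: number,
  windowMs: number = ASSUMED_INTERACTION_WINDOW_MS,
): string[] {
  return records
    .filter((r) => r.classified)
    .filter(
      (r) => r.lastInteractionMs === null || nowMs - r.lastInteractionMs > windowMs,
    )
    .map((r) => r.domain);
}
```

The sketch also shows the remaining gap: a classified domain that the user interacts with regularly, such as a site they are logged in to, never lands on the purge list.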

Can Privacy CG Find a Comprehensive Defense?

We believe other browsers with tracking prevention have no defense against bounce tracking (please correct if this is inaccurate) and it seems likely that bounce tracking is in active use. Because we've described bounce tracking publicly before, we don't consider the details in this issue to be a new privacy vulnerability disclosure. But we'd like the Privacy CG to define some kind of defense.

Here are a few ideas to get us started:
